U.S. Is Reportedly Using AI to Decide Where to Drop Bombs

If you've been wondering when our military will start deploying artificial intelligence on the battlefield, the answer is that it appears to be doing so already. The Pentagon has been using computer vision algorithms to help identify targets for airstrikes, according to a report from Bloomberg News. Most recently, those algorithms helped carry out more than 85 airstrikes as part of a mission in the Middle East.

The bombings in question, which took place in various parts of Iraq and Syria on Feb. 2, “destroyed or damaged rockets, missiles, drone storage and militia operations centers among other targets,” Bloomberg reports. They were part of an organized response by the Biden administration to the January drone attack in Jordan that killed three U.S. service members. The government has blamed Iranian-backed operatives for the attack.

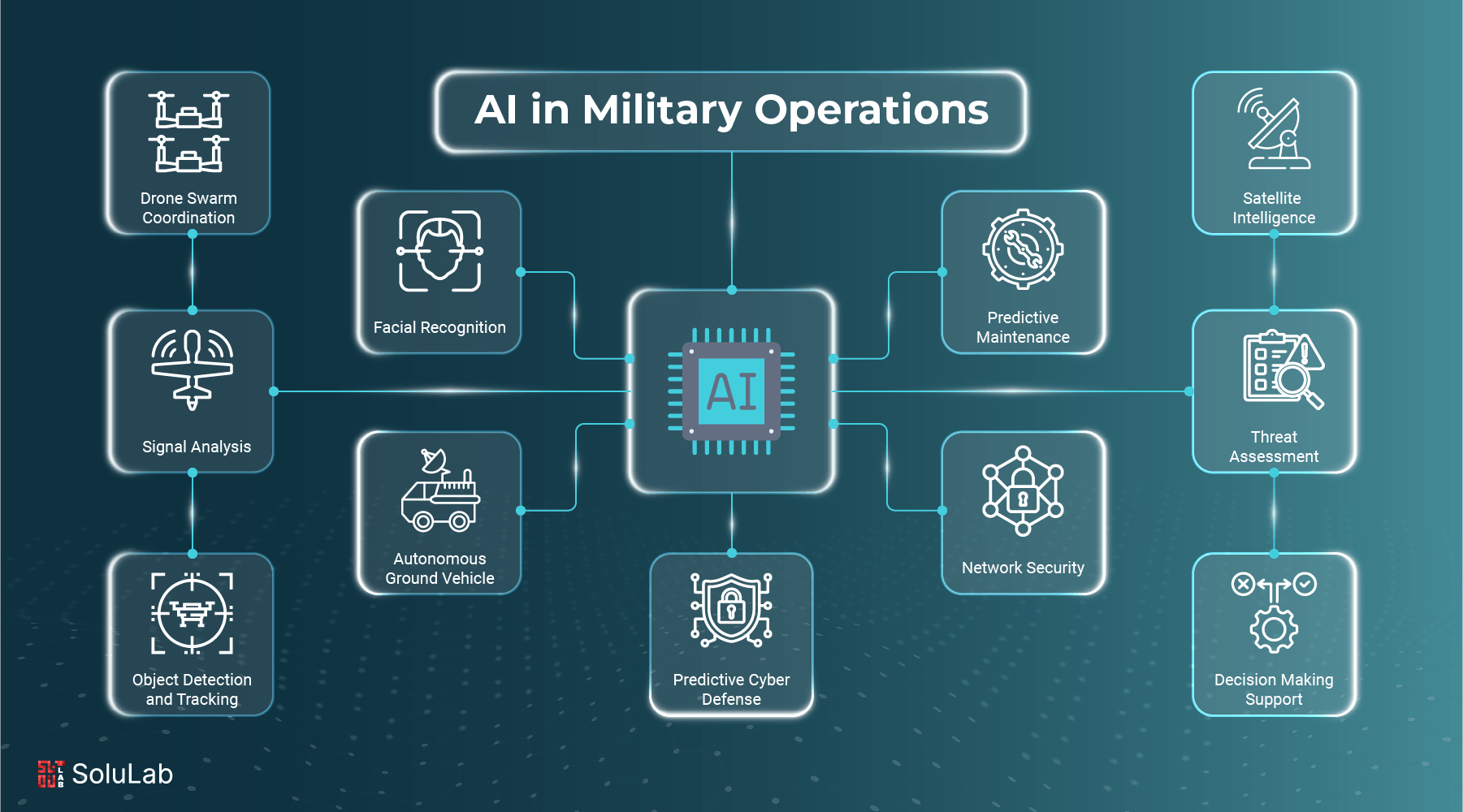

AI in Warfare

Bloomberg quotes Schuyler Moore, chief technology officer for U.S. Central Command, who said of the Pentagon's AI use: “We’ve been using computer vision to identify where there might be threats.” Computer vision, as a field, revolves around training algorithms to recognize particular objects in images or video. The algorithms that helped with the recent bombings were developed as part of Project Maven, a program launched in 2017 to spur greater use of automation throughout the DoD.

Controversy and Concerns

The use of AI for “target” acquisition appears to be part of a growing trend, and an arguably disturbing one. Late last year it was reported that Israel was using AI software to decide where to drop bombs in Gaza. The program can reportedly suggest as many as 200 targets in just 10 to 12 days. Israeli officials have said that this targeting is only the first step in a broader review process, which can involve human analysts.

If using software to identify bombing targets seems like a process prone to horrible mistakes, it's worth remembering that, historically speaking, the U.S. hasn't been particularly good at figuring out where to drop bombs anyway. Previous administrations have at times mistaken wedding parties for terrorist hideouts. The argument for AI involvement is that it reduces human culpability in such instances.

It's a controversial and complex topic, raising ethical questions surrounding the use of AI in warfare.