Meta is reportedly testing its first in-house AI training chip

Meta is testing its first in-house chip for AI training, Reuters reports. The move is intended to significantly lower the company's infrastructure costs and reduce its reliance on NVIDIA.

Reducing Dependence on NVIDIA

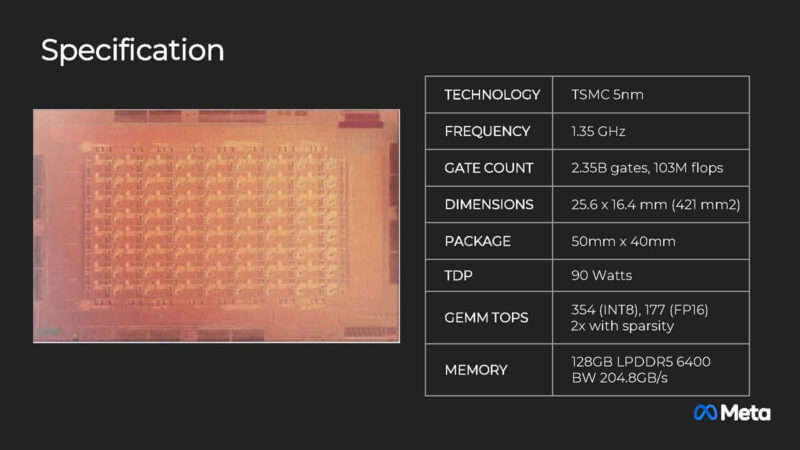

The chip is a dedicated accelerator, meaning it is designed only for AI tasks, which can make it more power-efficient than the general-purpose NVIDIA GPUs typically used for those workloads. Meta began a small-scale deployment of the chip after completing its first "tape-out," the milestone at which a finished design is sent to a chip factory for manufacturing.

Meta Training and Inference Accelerator (MTIA) Series

The AI chip is part of Meta's MTIA series, a family of custom in-house silicon designed for generative AI, recommendation systems, and advanced research. Meta has already used an MTIA chip for inference in the AI models that power Facebook and Instagram news feed recommendations. The company now plans to use the new training silicon for those recommendation systems as well.

Future Applications

Meta's long-term plan is to use the dedicated AI chips for generative products such as the Meta AI chatbot. The company intends to start with recommendation systems and then extend the chips to more advanced AI workloads.

Shifting Priorities

The move marks a shift in strategy for Meta, which has placed large GPU orders with NVIDIA in the past. It also follows an earlier in-house inference chip effort that ran into problems during a test deployment.