Stanford professor admits ChatGPT added false information to his legal filing

The use of AI tools in legal and academic work is under increased scrutiny after a prominent misinformation researcher acknowledged that AI-generated inaccuracies ended up in a court document.

The core incident

Stanford Social Media Lab founder Jeff Hancock has admitted that he used ChatGPT’s GPT-4o model to generate citations for a legal declaration, and that fabricated references were included as a result.

Technical details and impact

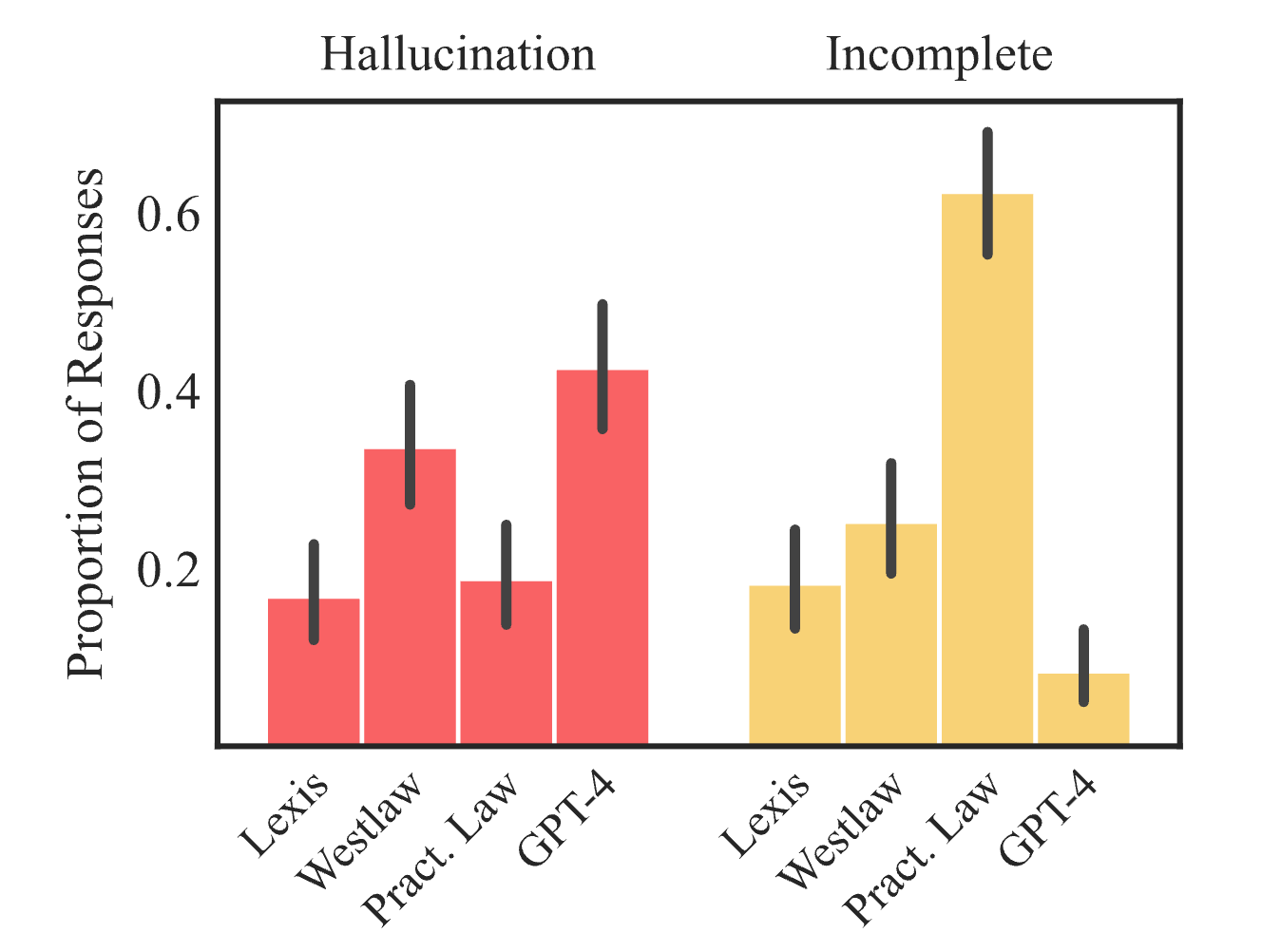

AI "hallucinations," in which a model produces plausible but false information, led to incorrect citations appearing in the legal filing.

Defense and clarification

Hancock asserts that the citation errors do not compromise the key arguments put forth in his declaration.

Looking ahead

The incident is a cautionary example of the limits of AI tools in professional and legal settings, especially where accuracy and verification are crucial. It could influence future rules on the use of AI in legal filings and academic research, and it is likely to prompt discussion of disclosure and verification requirements when such tools are used.
