AI model collapse: How and why hallucinations might worsen

Concerns about ChatGPT and other AI models hallucinating information date back to when ChatGPT first gained popularity, and the well-known incidents of AI generating inaccurate content show why. While AI companies are working to make their chatbots more accurate, hallucinations persist.

The Risk of Hallucinations

A recent study focusing on ChatGPT o3 and o4-mini, OpenAI’s latest reasoning models, revealed that these models tend to hallucinate even more than their predecessors. This underscores the importance of verifying information provided by AI chatbots and requesting sources for their claims.

The Concept of AI Model Collapse

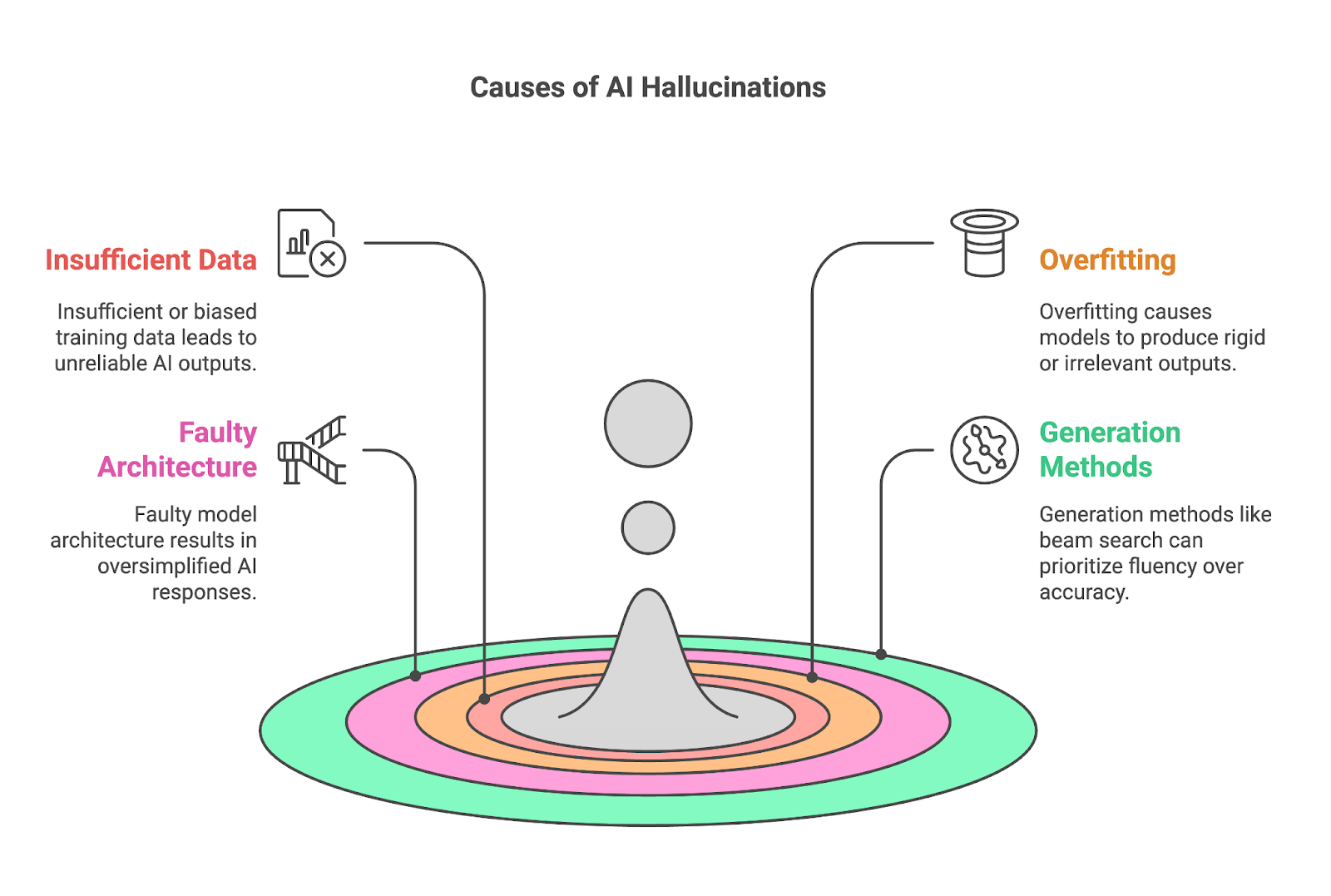

AI model collapse is a phenomenon in which AI models deteriorate in performance over time, and it poses a real risk to the development of AI technologies. A collapsed model generates unreliable and misleading information, with potentially disastrous consequences for many aspects of everyday life.


An opinion piece from The Register highlighted cases in which AI tools gave users inaccurate data drawn from unreliable sources. This phenomenon, known as Garbage In/Garbage Out (GIGO), reflects the gradual loss of accuracy, diversity, and reliability in AI outputs.

The Impact on Everyday Life

AI model collapse poses significant challenges, particularly in critical areas such as finance and business operations. The reliance on AI technologies for automated tasks like customer support increases the risk of encountering misleading information and erroneous outputs.

Techniques like Retrieval-Augmented Generation (RAG) aim to improve the accuracy of AI-generated responses by consulting external knowledge sources, but the potential for misleading reports and data breaches remains. Transparency and vigilance in using AI technologies are crucial to mitigating the risks associated with AI model collapse.
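To make the RAG idea concrete, here is a minimal sketch of the retrieval step. It is an illustration only: the function names (`tokenize`, `retrieve`, `build_prompt`), the word-overlap scoring, and the sample documents are all assumptions made for this example, and real systems typically use far more sophisticated search. The point it shows is that the answer can only be as good as the sources that get retrieved.

```python
def tokenize(text):
    """Split text into a set of lowercase words (a deliberately crude tokenizer)."""
    return set(text.lower().split())


def retrieve(question, documents, top_k=1):
    """Return the top_k documents sharing the most words with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Build a prompt that asks a model to answer from the retrieved sources."""
    sources = retrieve(question, documents, top_k)
    context = "\n".join(f"- {doc}" for doc in sources)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {question}"


# Hypothetical knowledge base for a customer-support bot.
docs = [
    "The refund window is 30 days",
    "Shipping takes 5 days",
]
print(build_prompt("what is the refund window", docs))
```

If the knowledge base itself contains inaccurate or AI-generated text, this step will happily retrieve it, which is how GIGO can carry over into RAG systems.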

Addressing the Issue

To combat AI model collapse, it is essential to train frontier models using human-generated content rather than synthetic data. This approach can help enhance the reliability and accuracy of AI systems, reducing the likelihood of hallucinations and misinformation.
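A toy simulation can illustrate why training on synthetic data is risky. The sketch below is not how any real model is trained; it assumes a "model" that only memorizes word frequencies in its training corpus and then generates the next corpus by sampling from those frequencies. All names and parameters (`next_generation`, `simulate_collapse`, vocabulary and corpus sizes) are hypothetical choices for this example.

```python
import random
from collections import Counter


def next_generation(corpus, size, rng):
    """'Train' on a corpus by counting words, then sample a new synthetic corpus."""
    counts = Counter(corpus)
    words = list(counts)
    weights = [counts[word] for word in words]
    return rng.choices(words, weights=weights, k=size)


def simulate_collapse(vocab_size=50, corpus_size=100, generations=30, seed=0):
    """Track how many distinct words survive as each model trains on the last one's output."""
    rng = random.Random(seed)
    # Generation 0 stands in for human-written data.
    corpus = [f"w{rng.randrange(vocab_size)}" for _ in range(corpus_size)]
    diversity = [len(set(corpus))]
    for _ in range(generations):
        corpus = next_generation(corpus, corpus_size, rng)
        diversity.append(len(set(corpus)))
    return diversity


print(simulate_collapse())
```

Because each generation can only reuse words that appeared in the previous one, a word that drops out never comes back, so the count of distinct words can only stay flat or shrink. Fresh human-generated data is the only way to reintroduce what was lost in this toy setup.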

As the debate around AI model collapse continues, it is imperative for companies and users to remain vigilant and prioritize the integrity of AI-generated content. By fostering a culture of accountability and transparency in AI development, we can mitigate the potential impact of AI model collapse on society.