Some AI companies face a new accusation: 'Openwashing' | The Star

There’s no agreed-upon definition of what open source artificial intelligence actually means. And some are accusing AI companies of ‘openwashing’ – using the ‘open source’ term disingenuously to make themselves look good.

There’s a big debate in the tech world over whether artificial intelligence models should be “open source”. Elon Musk, who helped found OpenAI in 2015, sued the startup and its CEO, Sam Altman, on claims that the company had diverged from its mission of openness. The Biden administration is investigating the risks and benefits of open source models.

What is Openwashing?

Openwashing is the accusation that some AI companies are using the “open source” label too loosely. Proponents of open source AI models say they’re more equitable and safer for society, while detractors say they are more likely to be abused for malicious purposes.

In a blog post on Open Future, a European think tank supporting open sourcing, Alek Tarkowski wrote, “As the rules get written, one challenge is building sufficient guardrails against corporations’ attempts at ‘openwashing’.”

Issues with Open Source AI Models

Last month the Linux Foundation, a nonprofit that supports open-source software projects, cautioned that “this ‘openwashing’ trend threatens to undermine the very premise of openness – the free sharing of knowledge to enable inspection, replication, and collective advancement”.

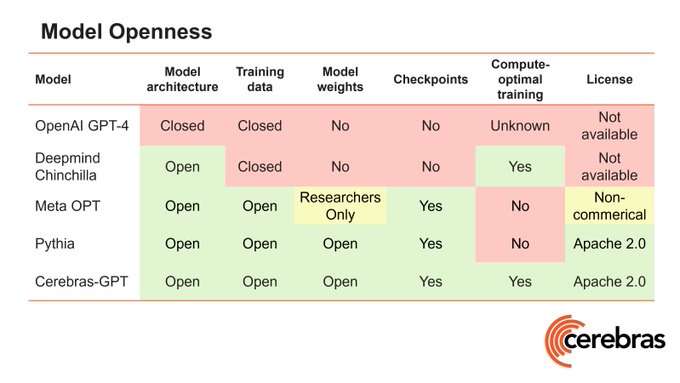

Organizations that apply the label to their models may be taking very different approaches to openness. For example, OpenAI, the startup that launched the ChatGPT chatbot in 2022, discloses little about its models (despite the company’s name). Meta labels its Llama 2 and Llama 3 models as open source but puts restrictions on their use.

The Challenges of Replicating Open Source AI Models

The main reason the label is contested is that while open source software allows anyone to replicate or modify it, building an AI model requires much more than code. Only a handful of companies can fund the computing power and data curation required. That’s why some experts say labeling any AI as “open source” is at best misleading and at worst a marketing tool.

Efforts to create a clearer definition for open source AI are underway. Researchers at the Linux Foundation in March published a framework that places open source AI models into various categories. And the Open Source Initiative, another nonprofit, is trying to draft a definition.

But doubts remain about the feasibility of truly open source AI. Experts see the prohibitive resource requirements for building AI models as a significant challenge.

Source: The New York Times