Israeli military creating ChatGPT-like AI tool targeting Palestinians

Israel’s military is developing an advanced artificial intelligence tool similar to ChatGPT. The tool is being trained on Arabic conversations obtained through surveillance of Palestinians living under occupation. The findings come from a joint investigation by The Guardian, the Israeli-Palestinian publication +972 Magazine, and the Hebrew-language outlet Local Call.

Development of the AI Tool by Unit 8200

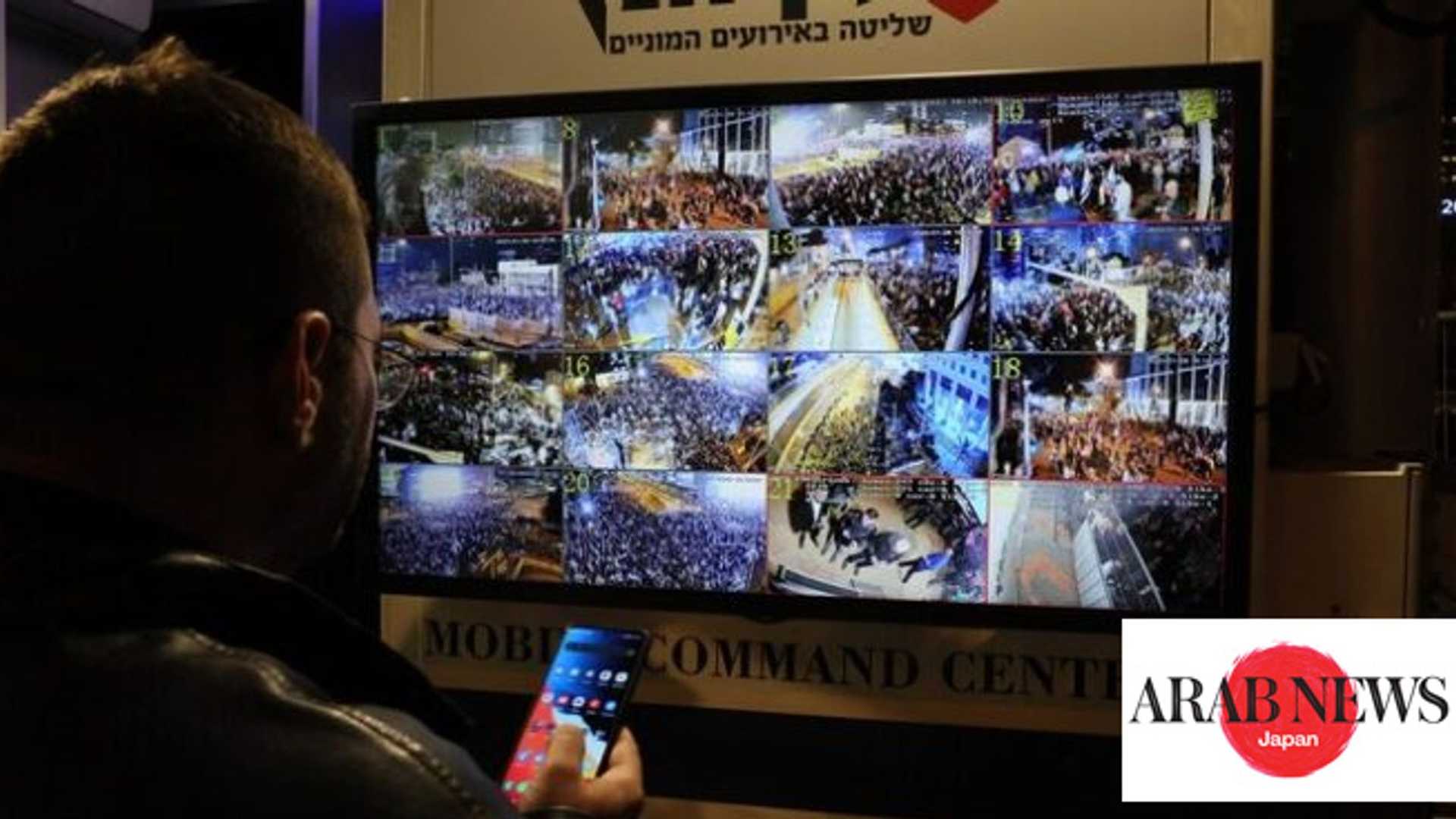

The tool is being developed by Unit 8200, the Israeli army’s secretive cyber warfare unit. To teach the model colloquial Arabic, the unit is feeding it vast quantities of intercepted phone calls and text messages between Palestinians.

Earlier Uses of AI

The investigation revealed that Unit 8200 had previously used smaller-scale machine learning models. Such tools can amplify control over the population, enabling uses that go beyond preventing shooting attacks, such as tracking human rights activists and monitoring Palestinian construction in specific areas.

Unit 8200’s AI tools, including The Gospel and Lavender, have played central roles in recent conflicts, helping to identify potential targets and to make predictions based on intercepted communications. Seeking to exploit newer AI advances, the Israeli army began exploring military applications of generative AI, particularly after OpenAI released ChatGPT to the public.

Building Its Own Model

However, OpenAI, the company behind ChatGPT, declined to give Unit 8200 direct access to its large language model (LLM). The unit therefore set out to build its own model, with a particular focus on processing spoken Arabic in its various dialects.

To meet this need, Unit 8200 recruited experts from private tech companies as reservists. These experts helped gather spoken Arabic texts into a single training dataset, which eventually grew to approximately 100 billion words.

Concerns and Criticisms

Zach Campbell of Human Rights Watch and Nadim Nashif of the Palestinian digital rights group 7amleh raised concerns about the potential misuse of such AI tools. They warned of the risks of using personal data to train AI models, which could lead to innocent people being incriminated. Such data collection raises serious concerns about privacy and human rights violations.

Nashif said that Palestinians have become subjects in Israel’s AI development process and that the resulting technology is being used to maintain control and domination over the population. This raises significant questions about the ongoing violation of Palestinian digital rights and the broader implications of weaponizing AI.