ChatGPT struggles to recognize reproducible science | Knowledge ...

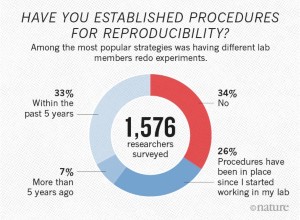

With over 100 million users and approximately 1 billion monthly website visits, the quality of the answers ChatGPT provides matters. Large language models could drive scientific breakthroughs by processing vast amounts of information in seconds and learning from data at a scale and speed unattainable by humans, but recognizing reproducibility, a core aspect of high-quality science, remains a challenge for them.

Study on ChatGPT and Scientific Reproducibility

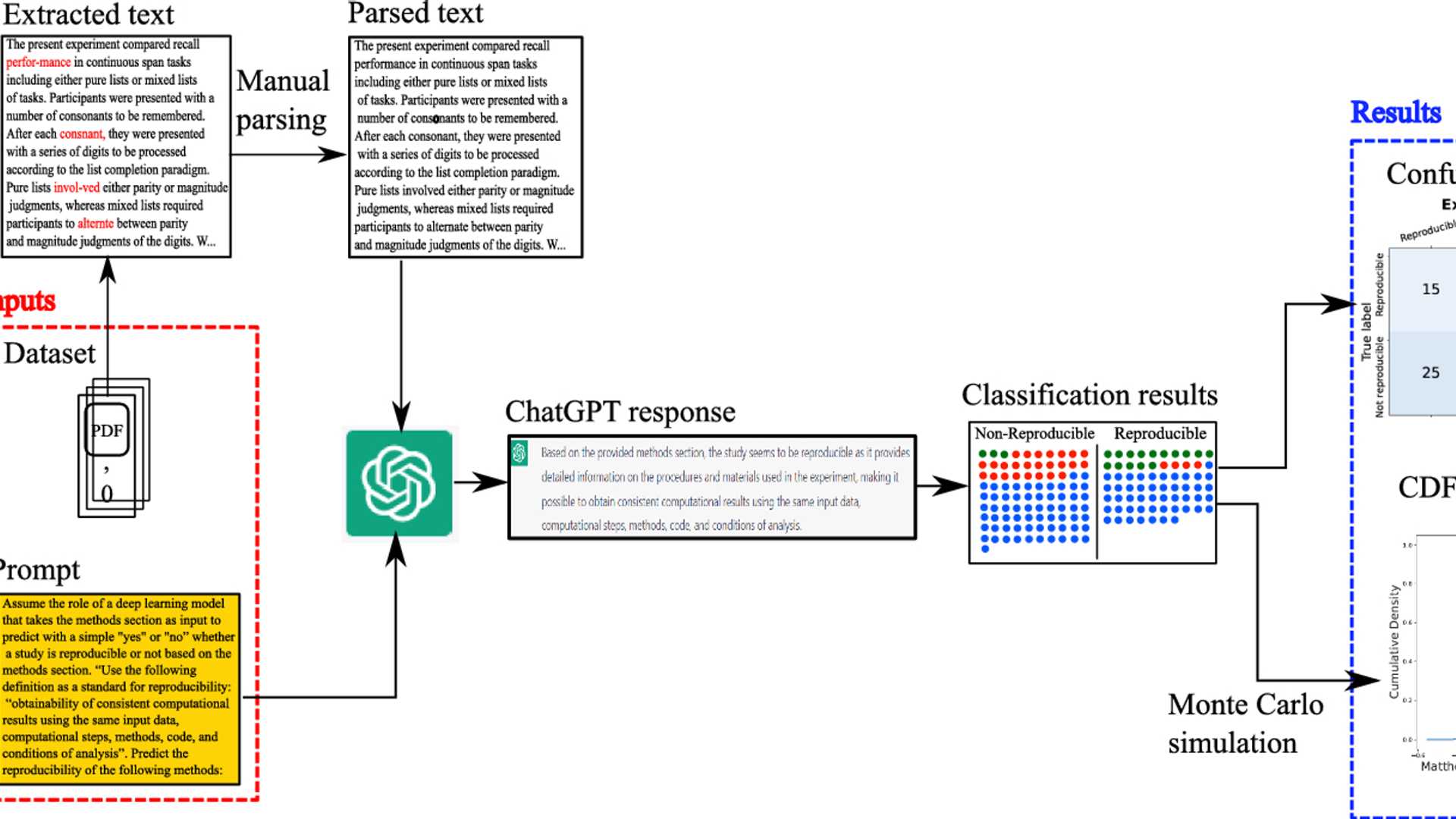

Our study investigates the effectiveness of ChatGPT (GPT-3.5) in evaluating scientific reproducibility, a critical and underexplored topic, by analyzing the methods sections of 158 research articles. In our methodology, we asked ChatGPT, through a structured prompt, to predict the reproducibility of a scientific article based on the extracted text from its methods section.
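To make the idea of a structured prompt concrete, here is a minimal sketch in Python. It is not the prompt used in the study: the template wording, the label set, and the helper names `build_prompt` and `parse_label` are all assumptions chosen for illustration, and no model is actually called.

```python
# Hypothetical label set; the study's actual answer categories are not stated here.
LABELS = ("reproducible", "not reproducible", "unclear")

# Hypothetical template; the study's real prompt wording is not reproduced.
PROMPT_TEMPLATE = (
    "You are assessing the reproducibility of a scientific article.\n"
    "Read the methods section below and answer with exactly one label "
    "from: {labels}.\n"
    "Then give a one-sentence justification.\n\n"
    "Methods section:\n{methods}\n"
)


def build_prompt(methods_text: str) -> str:
    """Fill the template with the extracted methods-section text."""
    return PROMPT_TEMPLATE.format(
        labels=", ".join(LABELS), methods=methods_text.strip()
    )


def parse_label(response: str) -> str:
    """Return the known label that appears earliest in the response.

    Ties on position are broken in favor of the longer label, so that
    "not reproducible" is never mistaken for "reproducible".
    """
    lowered = response.lower()
    hits = [
        (lowered.find(label), -len(label), label)
        for label in LABELS
        if label in lowered
    ]
    return min(hits)[2] if hits else "unparsed"


if __name__ == "__main__":
    prompt = build_prompt("Samples were sequenced; code is available on request.")
    print(prompt)
    print(parse_label("Not reproducible. The analysis code is not shared."))
```

Asking for a fixed label makes the answers easy to tally, while the `unparsed` fallback keeps free-form replies visible instead of silently forcing them into a category.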

The findings of our study reveal significant limitations. Of the 158 assessed articles, only 18 (11.4%) were accurately classified and 29 (18.4%) were misclassified, while for the remaining 111 (70.3%) ChatGPT had difficulty interpreting key methodological details that influence reproducibility. Future advancements should ensure consistent answers to identical or similar prompts, improve reasoning over technical, jargon-heavy text, and make the model's decision-making more transparent.
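The reported percentages can be checked directly from the counts. The short Python sketch below recomputes them; the category names are shorthand for the three outcomes described above, not labels taken from the study.

```python
# Counts as reported in the text; category names are shorthand.
counts = {
    "accurately classified": 18,
    "misclassified": 29,
    "interpretation difficulties": 111,
}

total = sum(counts.values())  # should equal the 158 articles analyzed

# Share of each outcome as a percentage, rounded to one decimal place.
shares = {name: round(100 * n / total, 1) for name, n in counts.items()}

if __name__ == "__main__":
    print(f"total articles: {total}")
    for name, pct in shares.items():
        print(f"{name}: {counts[name]} ({pct}%)")
```

The three counts add up to 158, and the rounded shares match the 11.4%, 18.4%, and 70.3% quoted in the text. The rounded values sum to 100.1% only because of rounding.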

Additionally, we suggest the development of a dedicated benchmark to systematically evaluate how well AI models can assess the reproducibility of scientific articles. This study highlights the continued need for human expertise and the risks of uncritical reliance on AI.
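As a rough illustration of what such a benchmark could measure, the Python sketch below scores a model on two axes: agreement with expert labels, and consistency across repeated runs of the same prompt. The data layout, the function names, and the toy entries are all hypothetical. This is one possible scoring scheme under those assumptions, not a design proposed in the study.

```python
from collections import Counter


def majority_label(runs):
    """Most common answer across repeated runs of the same prompt."""
    return Counter(runs).most_common(1)[0][0]


def consistency(runs):
    """Fraction of runs that agree with the most common answer."""
    return Counter(runs).most_common(1)[0][1] / len(runs)


def score(benchmark):
    """Return (accuracy, mean consistency) for a benchmark.

    benchmark: list of (expert_label, [model answers over repeated runs]).
    Accuracy compares the majority answer with the expert label.
    """
    n = len(benchmark)
    accuracy = sum(majority_label(runs) == gold for gold, runs in benchmark) / n
    mean_consistency = sum(consistency(runs) for _, runs in benchmark) / n
    return accuracy, mean_consistency


if __name__ == "__main__":
    # Toy, made-up entries purely to show the expected input shape.
    toy = [
        ("reproducible", ["reproducible", "reproducible", "not reproducible"]),
        ("not reproducible", ["reproducible", "reproducible", "reproducible"]),
    ]
    acc, cons = score(toy)
    print(f"accuracy={acc:.2f}, mean consistency={cons:.2f}")
```

Reporting consistency separately from accuracy matters because a model can be perfectly consistent and still wrong, as the second toy entry shows.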

Access the Full Article and Dataset

Our full dataset, including the extracted methods sections and responses from ChatGPT to our prompt, is shown here. It is also accessible through our repository (https://bitbucket.org/nordlinglab/nordlinglab-reprod-chatgpt/).

References for Further Reading

- National Academies of Sciences, Engineering, and Medicine (2019) Reproducibility and replicability in science. National Academies Press, Washington, DC.

- Baker M (2016) 1,500 scientists lift the lid on reproducibility. Nature 533(7604):452–454.

- Melo Peralta T, Nordling TEM (2022) A literature review of methods for assessment of reproducibility in science. Research Square preprint.