OpenAI's AI Detector for ChatGPT

OpenAI let the genie out of the bottle when they released ChatGPT on November 30, 2022. Almost two years later, they’re considering offering the antidote.

Generative AI, led by ChatGPT, has taken our creativity to new heights. ChatGPT showed us that artificial intelligence can generate human-like text, and it captured the world’s imagination.

However, as with any transformative technology, there have been unintended consequences, ranging from fake political propaganda to robot-written thesis papers. AI-generated content has raised concerns about:

OpenAI's Approach

But does an OpenAI-developed AI checker risk alienating existing ChatGPT users? Is a detector tool needed to discern human-written content?

OpenAI had previously tried building a detection tool with mixed results:

These numbers highlight the complexity of AI detection. Results were even worse for text shorter than 1,000 characters. Free AI detector startups, and South Park, came to the rescue of businesses, teachers and professors around the world!

As AI content improved, sounding and looking more human with every iteration, a new technique was needed to flag AI text.

Technological Advancements

To detect its own AI-generated text, OpenAI resorted to leaving breadcrumbs, known as watermarks, in its generated text. Though not apparent to the human eye, these breadcrumbs reportedly let its AI content detector flag ChatGPT text with 99.99% accuracy.
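OpenAI has not published how its breadcrumbs work, so the sketch below is not its method. It illustrates one publicly discussed family of text watermarks, often called "green list" schemes, under toy assumptions: the generator quietly prefers word pairs that a secret keyed hash marks as "green", and the detector counts how many green pairs a text contains. The key, the 50-word vocabulary, the random "model", and every function name are hypothetical.

```python
import hashlib
import math
import random

# All names and values here are hypothetical, chosen for illustration only.
SECRET_KEY = "demo-key"   # shared by the generator and the detector
GREEN_FRACTION = 0.5      # share of word pairs that count as "green"
CANDIDATES_PER_STEP = 8   # words the toy generator considers at each step


def is_green(prev_word: str, word: str) -> bool:
    """Keyed hash of a word pair; about half of all pairs come out green."""
    digest = hashlib.sha256(f"{SECRET_KEY}|{prev_word}|{word}".encode()).digest()
    return digest[0] / 256 < GREEN_FRACTION


def generate(vocab: list[str], length: int, watermark: bool, seed: int = 0) -> list[str]:
    """Toy stand-in for a language model: picks random words from vocab."""
    rng = random.Random(seed)
    words = [rng.choice(vocab)]
    while len(words) < length:
        candidates = rng.sample(vocab, CANDIDATES_PER_STEP)
        if watermark:
            # Prefer a green continuation; fall back if none is available.
            green = [w for w in candidates if is_green(words[-1], w)]
            candidates = green or candidates
        words.append(candidates[0])
    return words


def z_score(words: list[str]) -> float:
    """How many standard deviations the green count sits above chance."""
    pairs = len(words) - 1
    hits = sum(is_green(a, b) for a, b in zip(words, words[1:]))
    expected = GREEN_FRACTION * pairs
    std = math.sqrt(pairs * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (hits - expected) / std


vocab = [f"word{i}" for i in range(50)]
marked = generate(vocab, 200, watermark=True)
plain = generate(vocab, 200, watermark=False)
print(f"watermarked z-score: {z_score(marked):.1f}")
print(f"plain z-score:       {z_score(plain):.1f}")
```

A z-score far above zero means the text contains many more green pairs than chance would produce, which is the statistical fingerprint a reader never notices. Shorter texts give the detector fewer pairs to count, so the evidence is weaker, and a scheme like this only recognizes text from a generator that shares the key.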

When we read text not written by a human, sometimes we just... know. Though it's hard to put our finger on exactly why something sounds like it's been written by a robot, our built-in human AI text detector can tell!

Future Implications

The purpose of large language models was to write content that sounds and looks human. If those same models start deviating from that goal so that a detector can recognize their output, will the core product be worse off? Will OpenAI remain a trusted AI? Or the best AI? There is a delicate balance between improving detection capabilities and preserving the quality of the core product, ChatGPT.

The key consideration: is a detector for ChatGPT, created by OpenAI, the answer? It’s like a biopharma company offering the virus and then the cure for said virus. Poor example 😯.

Conclusion

As the founder of AI or Not, in my completely unbiased and humble opinion, third-party tools are the best bet to detect AI-generated content without compromising the language models themselves. Instead of focusing on a single model or modality, advanced AI can offer tools to detect all of them: AI for detecting AI.

Checking text matters for catching plagiarism and confirming original content. Apologies to the professors and teachers tasked with checking whether something was produced by AI, but other modalities have caused worse outcomes.

Impact on Industries

A detector for different modalities can help with:

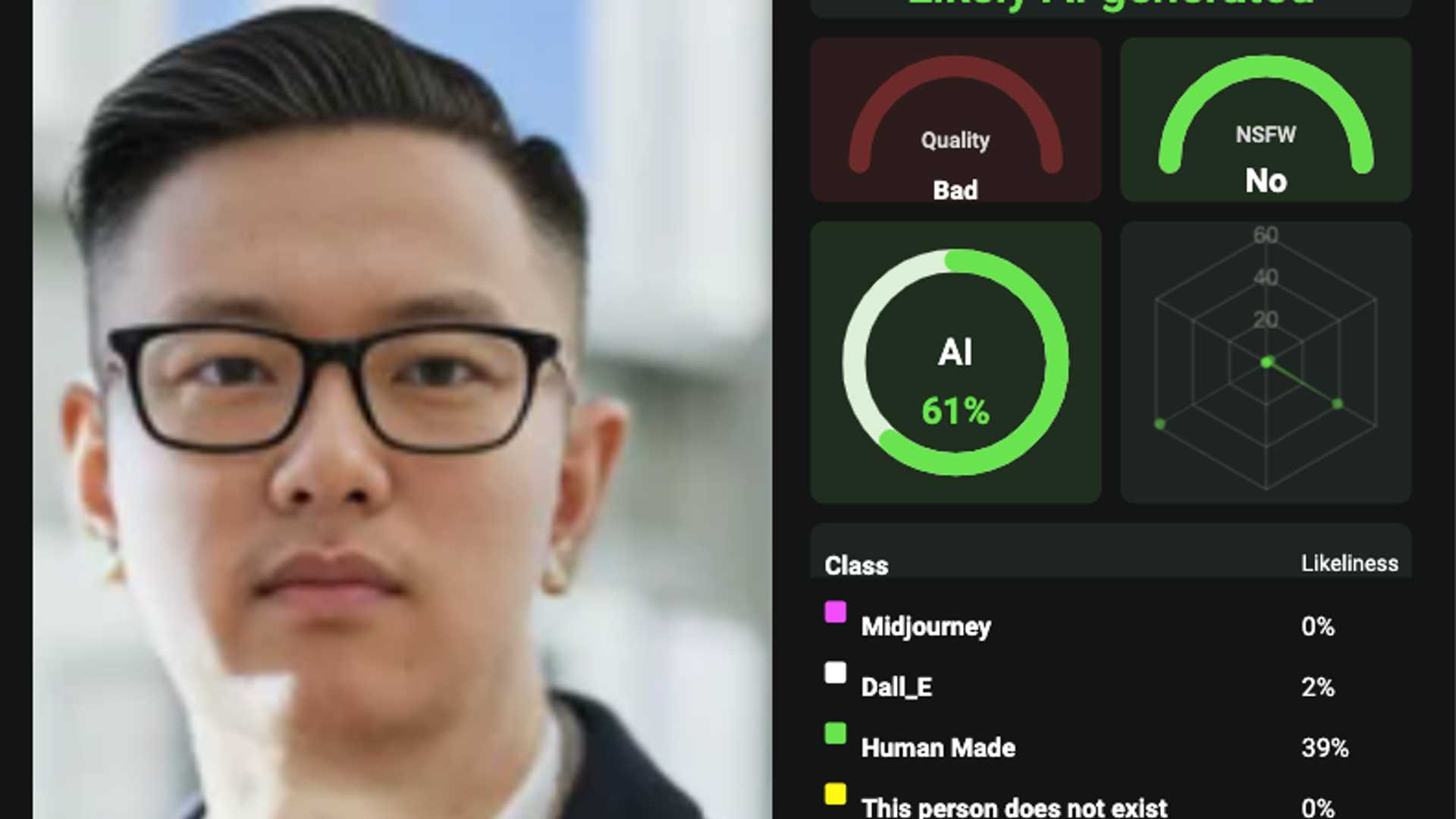

1. Image Detection

2. Audio Detection

3. Video Detection (emerging)

At AI or Not, we’ve seen firsthand which industries are being affected by the use of AI:

2. Journalism and Social Media

3. Cybersecurity

4. Insurance

Creators of AI and large language models are beginning to see the dark side of their technologies. Whether it's related to academic integrity or powering the latest attack from foreign entities, there have been unintended use cases.

This is no different from other technological advances that offered new ways to do harm. OpenAI has taken steps toward creating an AI detector for ChatGPT, including GPT-4. But the question remains whether that’s the right path to take and how it will affect the core product and innovation. As we continue to adopt AI in new ways, there is a need for machine learning companies to create protections as well.

Showing companies and people around the world whether content is AI-generated, across modalities, will be crucial to a trusted future.