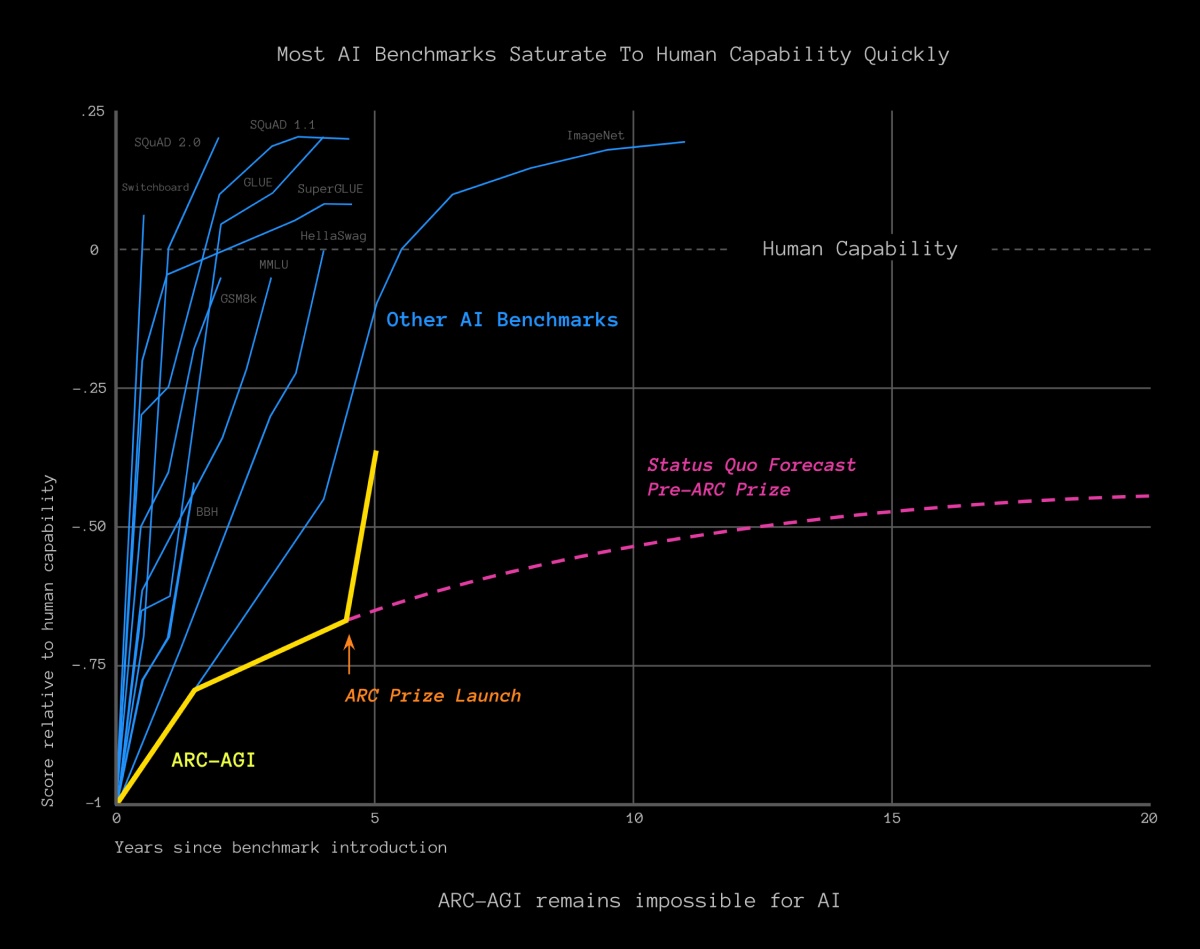

OpenAI's o3 System Achieves Human-Level Performance on ARC-AGI-Pub

On December 20, 2024, OpenAI's o3 system scored 85% on the ARC-AGI benchmark, well above the previous best AI result of 55% and on par with the average human score. It also performed well on a challenging mathematics test.

Generalisation and Intelligence

The ARC-AGI test measures an AI system's sample efficiency: how few examples of a new situation the system needs to see before it can work out how that situation behaves. This ability to generalize from limited data is crucial for artificial general intelligence (AGI) and is widely considered a fundamental aspect of intelligence.

The benchmark consists of grid puzzles. Each task shows a few example pairs of grids, and the solver must identify the rule that transforms each input grid into its output grid, then apply that rule to a new grid. By scoring well with only a few examples per task, o3 showed that it can derive rules that generalize.
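The task format described above can be sketched in a few lines of Python. This is only a toy illustration, not the benchmark's actual data format or o3's method: the grid encoding (tuples of integers), the four candidate rules, and the names `CANDIDATE_RULES` and `consistent_rules` are all assumptions made for the example.

```python
from typing import Callable, Dict, List, Tuple

# Assumption: a grid is a tuple of rows, each row a tuple of integer "colours".
Grid = Tuple[Tuple[int, ...], ...]


def identity(g: Grid) -> Grid:
    return g


def flip_horizontal(g: Grid) -> Grid:
    # Mirror every row left-to-right.
    return tuple(tuple(reversed(row)) for row in g)


def flip_vertical(g: Grid) -> Grid:
    # Reverse the order of the rows.
    return tuple(reversed(g))


def transpose(g: Grid) -> Grid:
    # Swap rows and columns.
    return tuple(zip(*g))


# Hypothetical, deliberately tiny rule set; real tasks need far richer rules.
CANDIDATE_RULES: Dict[str, Callable[[Grid], Grid]] = {
    "identity": identity,
    "flip_horizontal": flip_horizontal,
    "flip_vertical": flip_vertical,
    "transpose": transpose,
}


def consistent_rules(examples: List[Tuple[Grid, Grid]]) -> List[str]:
    """Return the names of all rules that reproduce every example output."""
    return [
        name
        for name, rule in CANDIDATE_RULES.items()
        if all(rule(inp) == out for inp, out in examples)
    ]


# Two worked examples (input grid, output grid), then an unseen test input.
TRAIN_EXAMPLES = [
    (((1, 0, 0), (0, 2, 0)), ((0, 0, 1), (0, 2, 0))),
    (((3, 3, 0), (0, 0, 4)), ((0, 3, 3), (4, 0, 0))),
]
TEST_INPUT: Grid = ((5, 0), (0, 6))

matching = consistent_rules(TRAIN_EXAMPLES)
prediction = CANDIDATE_RULES[matching[0]](TEST_INPUT)
print(matching, prediction)
```

Here only the left-to-right mirror reproduces both examples, so that rule is applied to the unseen grid. The point of the sketch is the shape of the problem: very few examples, and a rule that must carry over to an input that was never shown.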

Adaptation and Weak Rules

The o3 system's results on ARC-AGI suggest that it can find "weak" rules: rules that are no more specific than they need to be and that can be stated succinctly. In theory, a solver that identifies the simplest rule that still produces the desired outcome maximizes its ability to adapt to novel situations.
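One way to make the preference for simple rules concrete is to search candidate rules from shortest to longest and stop at the first one that fits the examples. The sketch below does this for a toy grid task. It uses program length as a crude stand-in for "weakness", which is an assumption of the example rather than a formal definition, and it says nothing about how o3 works internally. The primitives and function names are hypothetical.

```python
from itertools import product

# Assumption: a grid is a tuple of rows, each row a tuple of integers.


def flip_h(g):
    # Mirror every row left-to-right.
    return tuple(tuple(reversed(row)) for row in g)


def flip_v(g):
    # Reverse the order of the rows.
    return tuple(reversed(g))


def transpose(g):
    # Swap rows and columns.
    return tuple(zip(*g))


# Hypothetical primitive operations; a rule is a sequence of their names.
PRIMITIVES = {"flip_h": flip_h, "flip_v": flip_v, "transpose": transpose}


def run(program, grid):
    """Apply each named primitive in order."""
    for name in program:
        grid = PRIMITIVES[name](grid)
    return grid


def shortest_consistent_program(examples, max_len=3):
    """Enumerate programs by increasing length; return the first that fits."""
    for length in range(max_len + 1):
        for program in product(PRIMITIVES, repeat=length):
            if all(run(program, inp) == out for inp, out in examples):
                return program
    return None


# One example pair: the output is the input rotated by 180 degrees.
EXAMPLES = [(((1, 2), (3, 4)), ((4, 3), (2, 1)))]

program = shortest_consistent_program(EXAMPLES)
print(program)
```

Many longer programs also reproduce the example, for instance any program that adds two cancelling flips, but the search returns the two-step one because it checks shorter programs first.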

Unknown Potential

Despite this performance, much about the o3 system remains undisclosed. OpenAI has released few details and has limited early testing to a select group of researchers and institutions.

Further evaluation is needed to determine how o3's adaptability compares with that of humans. Its eventual release should show how effective it is in practice and what it implies for the future of AI development.