Capabilities and alignment of LLM cognitive architectures - AI development

This article discusses recent developments in AI research, focusing on the capabilities and alignment of LLM cognitive architectures. Large language models (LLMs) have impressive automatic language processing abilities but lack goal-directed agency, executive function, episodic memory, and sensory processing. Recent work has added all of these to LLMs, creating language model cognitive architectures (LMCAs). In an LMCA the added capacities interact synergistically with one another, enhancing the effective intelligence of the underlying LLM.

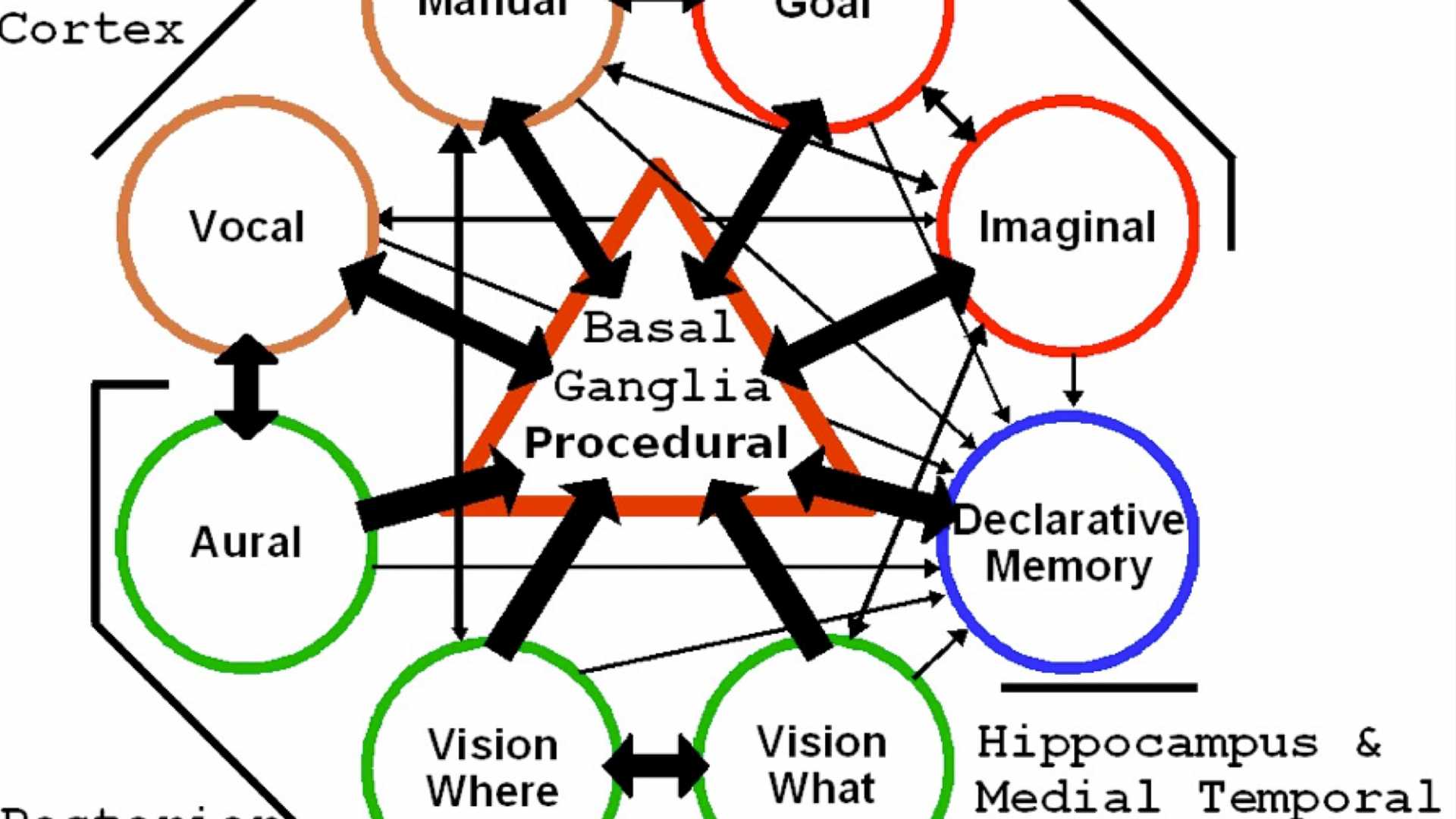

The LMCA approach makes an LLM the natural language cognitive engine at the center of a cognitive architecture. Cognitive architectures are computational models of human brain function that include separate cognitive capacities working synergistically. AutoGPT, HuggingGPT, and similar script wrappers and tool extensions for LLMs are only the beginning: there is still low-hanging fruit, and there are untapped synergies, that will add further capability to LLMs.
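To make the idea of an LLM at the center of a cognitive architecture concrete, here is a minimal sketch, not the design of AutoGPT, HuggingGPT, or any specific system. It assumes a hypothetical `llm` callable (stubbed here as `stub_llm` so the example runs without a model), a toy `EpisodicMemory` with naive keyword retrieval, and a simple loop standing in for executive function. All names are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical stand-in for a real LLM call: takes a prompt, returns text.
LLM = Callable[[str], str]


def stub_llm(prompt: str) -> str:
    """Toy model (assumption): echoes the last line of the prompt."""
    last_line = prompt.strip().splitlines()[-1]
    return f"Thought about: {last_line}"


@dataclass
class EpisodicMemory:
    """Toy episodic memory: stores past outputs as plain text."""
    episodes: List[str] = field(default_factory=list)

    def store(self, text: str) -> None:
        self.episodes.append(text)

    def recall(self, query: str, k: int = 2) -> List[str]:
        # Naive retrieval: rank episodes by word overlap with the query.
        words = set(query.lower().split())
        ranked = sorted(
            self.episodes,
            key=lambda e: len(words & set(e.lower().split())),
            reverse=True,
        )
        return ranked[:k]


@dataclass
class LMCA:
    """The LLM is the cognitive engine; the loop plays the executive role."""
    llm: LLM
    memory: EpisodicMemory = field(default_factory=EpisodicMemory)

    def run(self, goal: str, max_steps: int = 3) -> List[str]:
        outputs = []
        for step in range(1, max_steps + 1):
            recalled = self.memory.recall(goal)
            prompt = "\n".join(
                [f"Goal: {goal}", "Relevant memories:", *recalled,
                 f"Step {step}: what should be done next?"]
            )
            result = self.llm(prompt)
            self.memory.store(result)  # each step becomes a new episode
            outputs.append(result)
        return outputs


agent = LMCA(llm=stub_llm)
for line in agent.run("write a summary", max_steps=2):
    print(line)
```

Swapping `stub_llm` for a real model call would leave the loop and memory unchanged, which is the sense in which the LLM acts as one component among several.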

The LMCA approach enables natural language alignment (NLA): goals, including ethical goals, are stated to the system in natural language. An LMCA pursues those goals, performs extensive, iterative, goal-directed thinking, and balances ethical goals against one another much as humans do. These systems summarize their processing in English, which is an enormous change from the way alignment problems have previously been approached. The approach also provides substantial benefits for initial alignment, corrigibility, and interpretability.
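One way to picture natural language alignment is a review step in which each proposed action is checked against goals written in plain English, with the verdicts kept as a readable log. The sketch below is an illustration under stated assumptions, not a description of any existing system: the goal wording, `build_review_prompt`, `review_actions`, and the keyword-based `stub_reviewer` (standing in for an LLM call) are all hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical reviewer: an LLM call that returns "APPROVE" or "REJECT: reason".
Reviewer = Callable[[str], str]

# Illustrative goals only; a real system would need far more careful wording.
ALIGNMENT_GOALS: Tuple[str, ...] = (
    "Do not deceive the user.",
    "Stop and ask a human before taking irreversible actions.",
)


def build_review_prompt(goals: Sequence[str], action: str) -> str:
    """Put the goals and the proposed action into one English prompt."""
    lines = ["You are reviewing a proposed action against these goals:"]
    lines += [f"- {goal}" for goal in goals]
    lines += [f"Proposed action: {action}",
              "Answer APPROVE or REJECT with a reason."]
    return "\n".join(lines)


def stub_reviewer(prompt: str) -> str:
    """Toy stand-in for an LLM (assumption): rejects anything that deletes."""
    action_line = next(
        line for line in prompt.splitlines()
        if line.startswith("Proposed action:")
    )
    if "delete" in action_line.lower():
        return "REJECT: deletion may be irreversible; ask a human first."
    return "APPROVE"


def review_actions(
    actions: Sequence[str],
    reviewer: Reviewer,
    goals: Sequence[str] = ALIGNMENT_GOALS,
) -> List[Tuple[str, str]]:
    """Return an English-readable log of (action, verdict) pairs."""
    return [(a, reviewer(build_review_prompt(goals, a))) for a in actions]


log = review_actions(["Summarize the report", "Delete all backups"], stub_reviewer)
for action, verdict in log:
    print(f"{action!r}: {verdict}")
```

Because both the goals and the verdicts are ordinary English text, a human can read the log directly, which is the interpretability benefit the paragraph above points to.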

Although this approach relies heavily on calls to large language models, its computational costs are low by the standards of cutting-edge AI work. Individuals and businesses can therefore contribute to its progress, which may speed up the overall development of AGI and shorten timelines to AGI. That possibility makes careful consideration of the implications for alignment all the more important.

The LMCA approach follows the blueprint of human intelligence, which is an emergent property greater than the sum of our individual cognitive abilities. This approach may lead to AGI at or above human level, and possibly to AI that poses existential risk (X-risk). By discussing how LLMs are being agentized and extended with prosthetic cognitive capacities, we can anticipate technical innovation rather than lag behind it.