Generative AI: Can ChatGPT Leak Sensitive Data? | HackerNoon

ChatGPT by OpenAI reached 1 million users in just five days and became a worldwide sensation! Users ask it questions and turn to it for inspiration; some even claim it is the new Google and that traditional search engines will become obsolete. Only time will tell. ChatGPT has become part of our daily lives, and some users are even addicted to it, while others still rely on Google for inspiration. The technology industry runs on data as its fuel, so understanding the consequences of our decisions about that data is crucial.

Nothing is 100% secure, and the bad news is that ChatGPT is not the most secure tool. We have to stay vigilant at all times; security starts with continuous awareness and training.

The Resourcefulness of ChatGPT

As a data engineer, I want to explore what the tool knows about us and how we can stay safe.

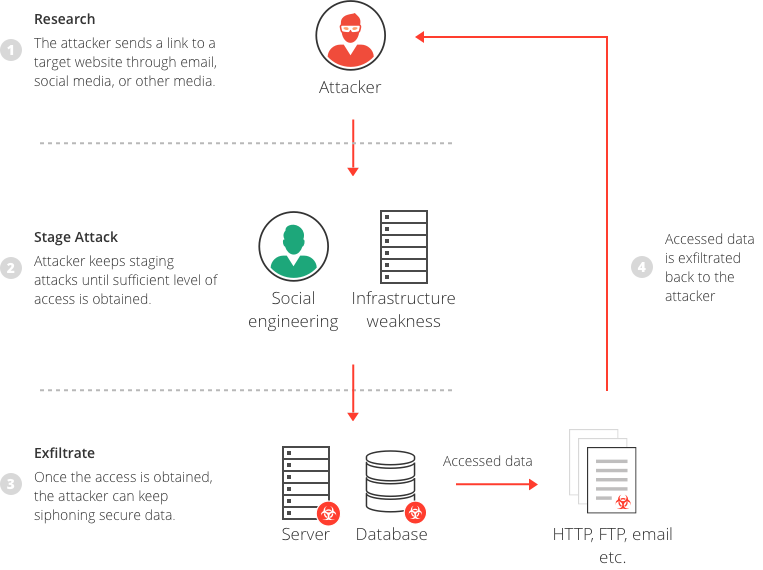

The tool is very resourceful: we can learn almost anything, at any time. However, it may provide erroneous information, and it stores more data than we might imagine! Organizations now rely on it for tasks such as writing web content, brainstorming, and reviewing code; it also serves as an AI assistant that helps developers write code, among many other uses. Research has shown that both open-source and closed models such as GPT can leak private data when tricked.

What happens when I enter data in the prompt? ChatGPT saves that data along with the conversation history and other information, including our IP address, email address, device type, location, and any sensitive data we type into the prompt. For a business, becoming a victim of data leakage is a frightening prospect, and despite the industry hype, some companies around the world have banned the tool.

Data Privacy and Security Concerns

Here is the data ChatGPT knows about us, all of which is considered personally identifiable information (PII); the list is not exhaustive. From a cloud perspective, the personal information ChatGPT receives is no different from what any software-as-a-service (SaaS) app collects.

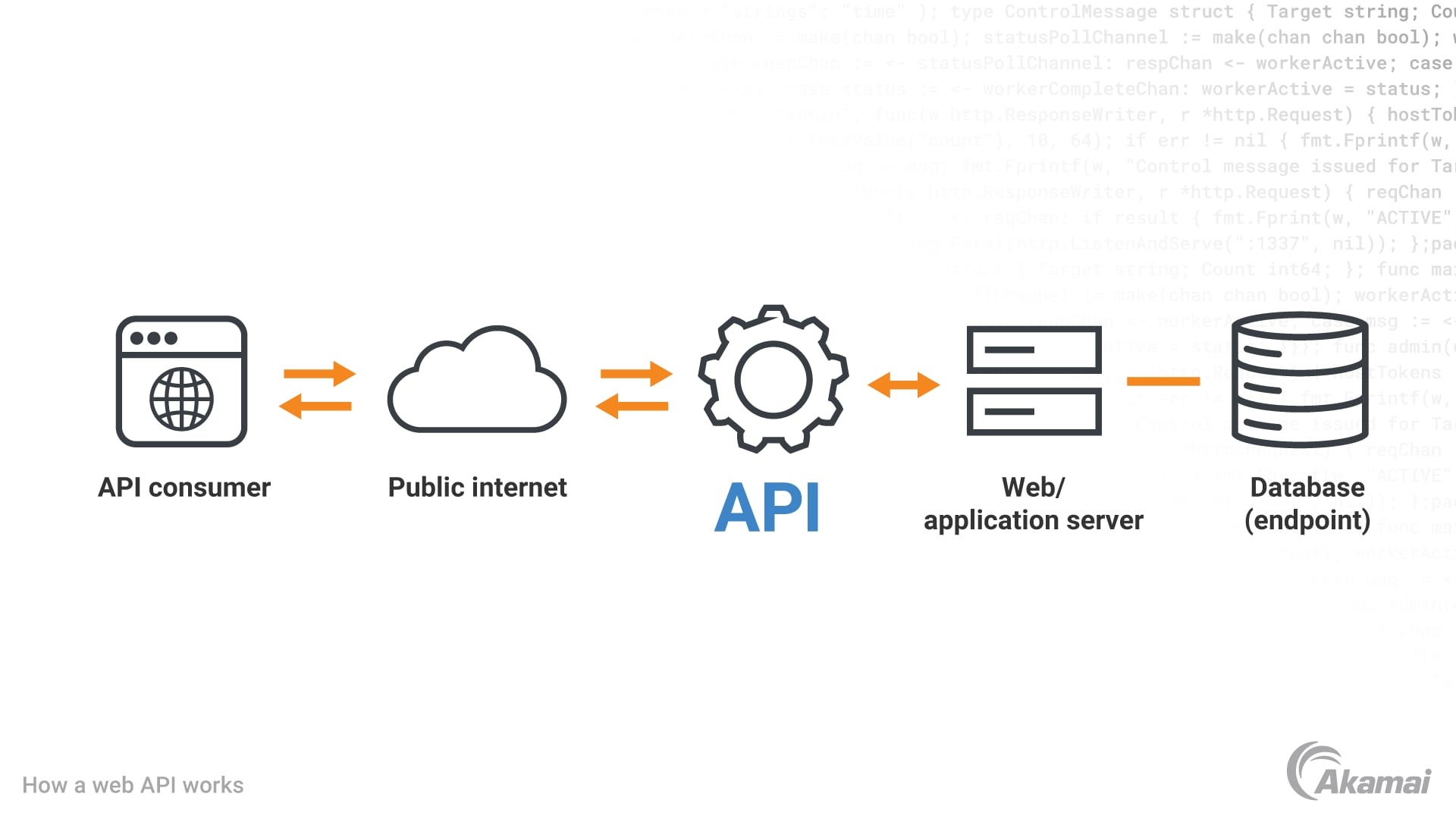

No matter the hype, generative AI tools will always have weaknesses, and threat actors will trick them into doing more damage. For companies without strong security policies, anything can happen, resulting in a damaged reputation or even bankruptcy! Customers trust only companies that visibly protect their privacy; trust is not given blindly, and security and privacy are precious. Since ChatGPT arrived, a plethora of online tools have integrated the OpenAI APIs, ChatGPT itself being the most popular. Are these tools secure, and how can we stay safe when using them? With massive cyberattacks happening worldwide, data theft is inescapable, and threat actors can steal data without our noticing.

Redaction APIs such as Redaction by Symbl AI and Redact by Pangea Cyber can help developers redact and anonymize data when they integrate OpenAI into their apps. Generative tools cannot secure themselves, but developers with fewer security skills can worry less thanks to security APIs they can add to their apps with a few lines of code.
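The redaction step can be illustrated with a minimal sketch. It does not call the Symbl AI or Pangea APIs; it is a hypothetical, regex-based stand-in. The names `PII_PATTERNS`, `redact`, and `ask_model` are illustrative assumptions, and the sketch covers only three PII types: email addresses, IPv4 addresses, and international phone numbers. A real redaction service detects far more.

```python
import re

# Hypothetical patterns for a few common PII types (illustrative, not exhaustive).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IPV4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    # International format only: a leading '+' followed by digits, spaces, or hyphens.
    "PHONE": re.compile(r"\+\d[\d -]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace every PII match with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def ask_model(prompt: str, send) -> str:
    """Redact the prompt, then pass it to `send`.

    `send` is a hypothetical stand-in for whatever function actually
    calls the model API; only the redacted text ever reaches it.
    """
    return send(redact(prompt))


if __name__ == "__main__":
    prompt = "Email jane.doe@example.com or call +33 6 12 34 56 78 from 192.168.1.10."
    print(redact(prompt))  # Email [EMAIL] or call [PHONE] from [IPV4].
```

The design choice is to redact on the client side, before the request leaves the app. Whatever the provider stores, the original values were never sent.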

Such GDPR-driven services can redact sensitive data in a few lines of code. Data privacy is precious, so it's important to stay safe online by not sharing sensitive information in the prompt, such as banking details, health information, or personal contacts. Once privacy and security are compromised, the damage is hard to repair. Insecurity costs more than security, and everyday users should remain vigilant to avoid catastrophic data theft. Developers eager to innovate by integrating the OpenAI APIs into their apps should always look for GDPR-compliant data redaction services; by doing so, they protect their users and their data. ChatGPT cannot secure itself, no matter the level of security applied!
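Bank information is among the sensitive data users should keep out of prompts. One way an app could enforce this is a hypothetical pre-flight check, not part of any service named in this article, that refuses to send a prompt that appears to contain a payment card number. The sketch below finds runs of 13 to 19 digits, optionally separated by spaces or hyphens, and confirms each run with the Luhn checksum, which payment card numbers are designed to satisfy. The function names are illustrative assumptions.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    # Walk from the rightmost digit and double every second digit.
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9  # same as summing the two digits of the product
        total += d
    return total % 10 == 0


def contains_card_number(prompt: str) -> bool:
    """Flag prompts that seem to include a payment card number."""
    for match in CARD_CANDIDATE.finditer(prompt):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            return True
    return False


def safe_to_send(prompt: str) -> bool:
    """Hypothetical gate: only prompts without card numbers are allowed out."""
    return not contains_card_number(prompt)


if __name__ == "__main__":
    print(safe_to_send("Summarize this meeting for me."))  # True
    print(safe_to_send("My card is 4111 1111 1111 1111"))  # False
```

An app might call `safe_to_send` before forwarding a prompt to the OpenAI API and ask the user to remove the number when it returns `False`. The Luhn check only reduces false positives. Any digit run that happens to satisfy the checksum will still be flagged, and other banking details such as IBANs are not covered.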