Meta's new era: AI image labelling on social media platforms

Meta, the parent company of Facebook, Instagram, and Threads, has announced that it will label AI-generated images shared on its platforms. The move comes at a time when major elections are taking place around the world, and it highlights how difficult it has become to tell human-created content from synthetic content. The initiative is being championed by Sir Nick Clegg, the former UK deputy prime minister who is now an executive at Meta.

The Labelling Process

Meta already marks photorealistic images produced with its Meta AI tool with an "Imagined with AI" label. The company is also developing tools to detect the markers that identify AI-generated images from other providers, such as Google, OpenAI, Microsoft, and Adobe. The labels are due to appear on Facebook, Instagram, and Threads in the coming months. Sir Nick, Meta's president of global affairs, expects this rollout period to yield valuable insights into how people create and share AI content, how much transparency users want, and how these technologies continue to develop. These insights are expected to help define industry standards and shape Meta's future strategy.

Industry Collaboration and Standards

Meta has been working with industry partners to establish common technical standards for identifying AI-generated content. Building on these standards, the company's technology will be able to detect AI-generated images and label them in all languages. The urgency of the decision is underlined by the sheer volume of AI-generated images online: an estimated 15 billion were uploaded in 2022 alone. Many of these images are harmless, but a significant share are harmful, including fake explicit content and politically motivated misinformation.

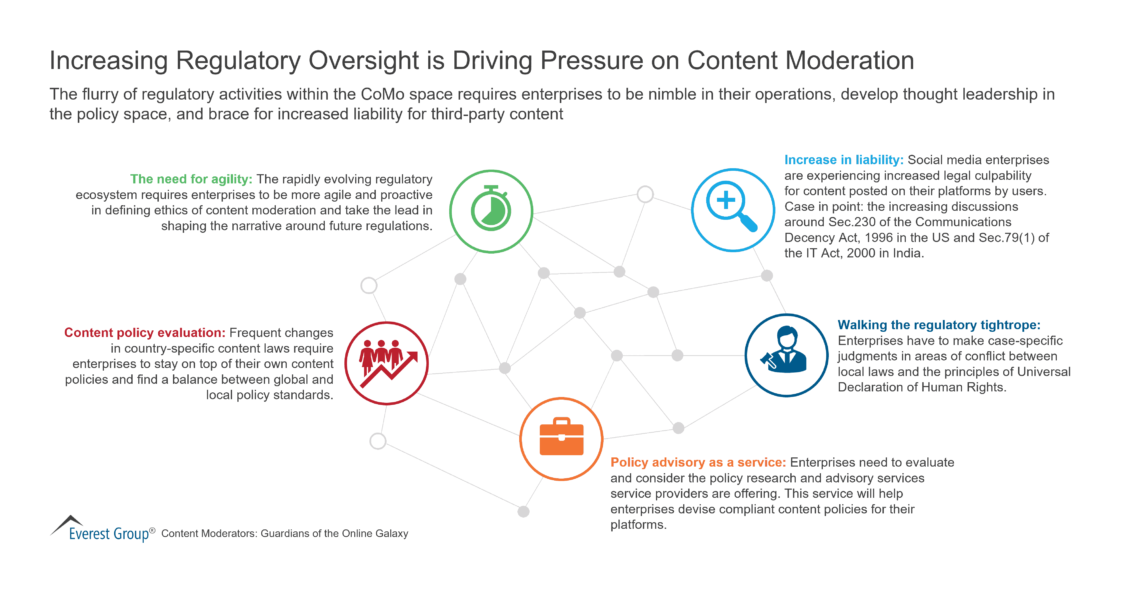

Regulatory Response and Implications

Meta's move can also be seen as a response to growing regulatory pressure on social media platforms. Legislation such as the UK's Online Safety Act, which makes sharing fake explicit images of someone without their consent a criminal offence, has pushed companies to take proactive steps to protect users. In the US, lawmakers have also criticised social media companies for failing to protect users adequately, signalling that new legislation may follow.

Meta's stance is likely to set a precedent for the industry, encouraging other companies to adopt similar measures against the spread of harmful AI-generated content. Digital watermarking is considered a promising solution, but its effectiveness is still debated: researchers have shown that watermarks, including markers embedded in an image's metadata, can be removed with little effort, which calls the reliability of such methods into question. The real test of Meta's initiative will come in the months ahead, when it becomes clear whether it leads to a tangible reduction in harmful AI-generated content.
