Meta Addresses Privacy Blunder With Meta AI Chatbot

Meta is facing criticism over how its new artificial intelligence venture handles user data. The company's AI chatbot app, which lets users interact with an AI assistant, introduced a public "Discover" feed. The feature was intended to showcase shared interactions, but it quickly became a problem when reports emerged of users unknowingly revealing personal information, from medical questions to legal issues, to a wider audience.

According to Business Insider, many users shared their chats without realizing that these interactions could be viewed publicly on the Discover feed. Without clear warnings or an intuitive design, sensitive information was left visible to strangers. The situation alarmed privacy advocates and tech enthusiasts, who questioned Meta's commitment to safeguarding user data as it races to compete in the AI industry.

A Design Flaw Exposed

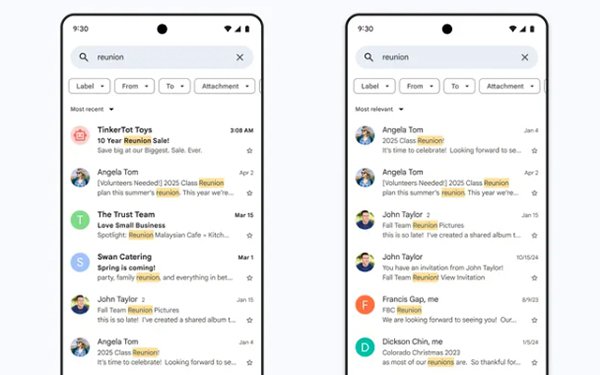

The core of the problem appears to stem from the app's user experience design, or rather, the lack thereof. Critics argue that Meta failed to incorporate standard safeguards, such as explicit opt-in processes or prominent notices about the public nature of shared content. The behavior of the "share" button was ambiguous, leading users to believe their posts were private when, in reality, they were being broadcast to a broader audience on the Discover feed.

The Mozilla Foundation launched a public campaign urging Meta to fix this flaw, emphasizing that private chats were being exposed without users' informed consent. The organization stressed the need for clearer design cues to prevent accidental oversharing, a view shared by various tech communities. The incident highlights not only a technical error but also the ethical responsibilities that tech giants bear when deploying AI tools that handle personal data.

Meta’s Response and Fixes

In response to the backlash, Meta has taken steps to address the situation. Business Insider reports that the company introduced a new warning prompt that appears before users can post to the public feed. The update is meant to ensure people understand who can see their content, directly addressing the inadvertent disclosures that drew public attention.

While this corrective action is a positive step, questions remain about whether it is enough to rebuild trust. Privacy experts advocate additional measures, such as making conversations private by default or giving users more control over shared content. The Discover feed, initially designed to encourage engagement, has become a cautionary tale about what happens when user protection is not prioritized.

Broader Implications for AI Privacy

This controversy underscores a broader challenge facing the tech industry: balancing the appeal of AI-driven features against the need for privacy. Meta's misstep with the Discover feed is not an isolated incident but part of an ongoing trend in which user data is compromised in the pursuit of market dominance.

As AI tools become more common in everyday life, incidents like this serve as a wake-up call. Companies must invest in robust privacy frameworks and transparent communication to avoid alienating users. While Meta's warning prompt may address an immediate concern, the deeper problem of trust in AI platforms lingers. Solving it will take more than quick fixes; it demands a fundamental reassessment of how personal data is managed in the era of artificial intelligence.