How to Build a Powerful and Intelligent Question-Answering System ...

In this tutorial, we will explore the process of constructing a robust question-answering system that leverages various tools and frameworks to enhance its capabilities.

Combining Strengths

The system we will build combines the strengths of Tavily Search API, Chroma, Google Gemini LLMs, and the LangChain framework. By integrating these tools, we create a pipeline that enables real-time web search, semantic document caching, and contextual response generation. LangChain's modular components, including RunnableLambda, ChatPromptTemplate, ConversationBufferMemory, and GoogleGenerativeAIEmbeddings, facilitate the seamless integration of these tools.

Advanced AI Search Assistant

We will install and upgrade a comprehensive set of libraries essential for building an advanced AI search assistant. These libraries cover various aspects such as retrieval, LLM integration, data handling, visualization, and tokenization, forming the core foundation for a context-aware question-answering system.

Core Components

Key components imported include libraries for environment variables, secure input, time tracking, data science tools, and JSON parsing. These libraries provide the necessary functionalities for data handling, visualization, and structured data processing.

Secure Initialization

We will securely initialize API keys for Tavily and Google Gemini to ensure safe and repeatable access to external services. Additionally, a standardized logging setup using Python's logging module will be configured to monitor execution flow and capture debug or error messages.

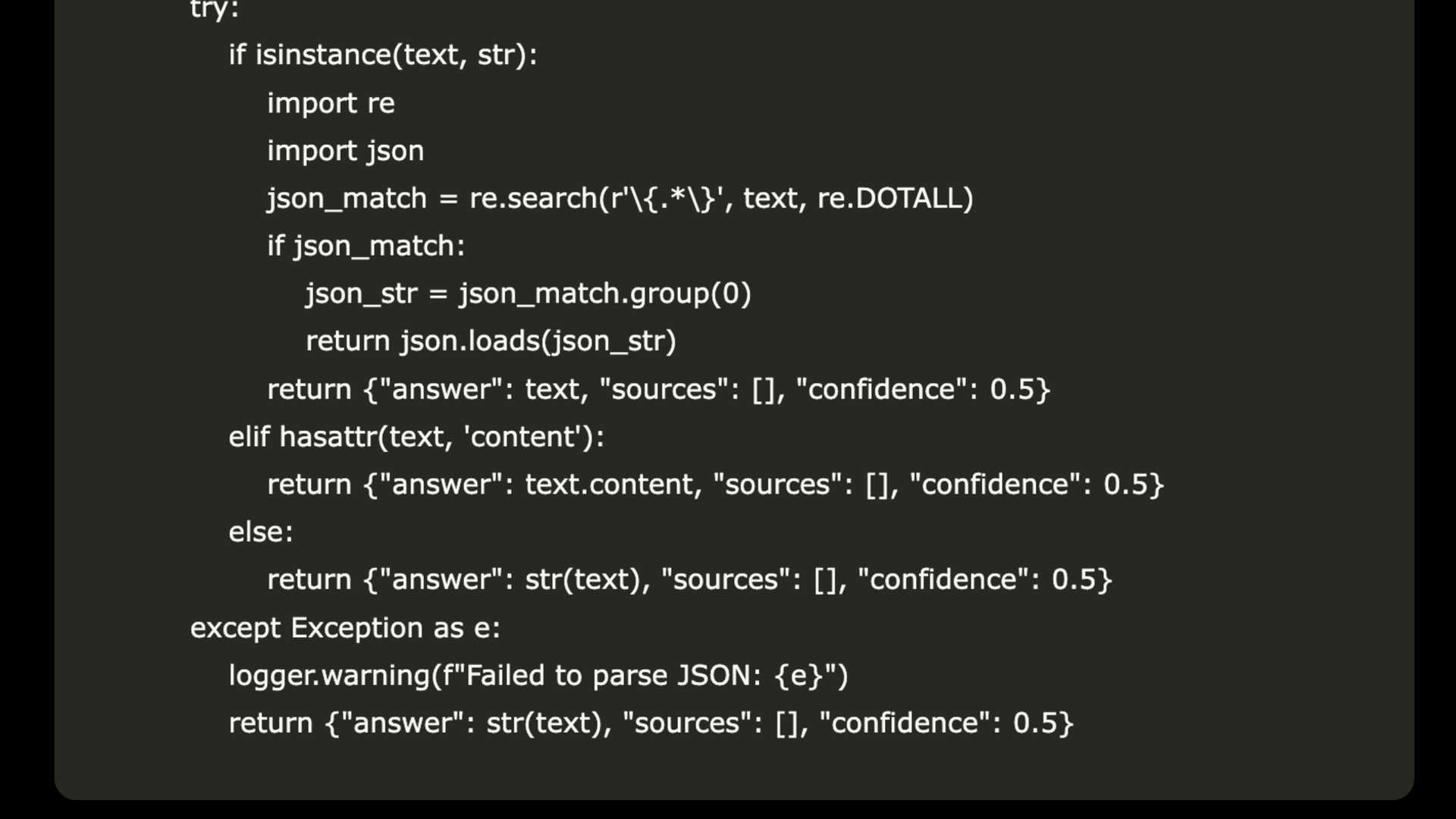

Essential Classes

The tutorial defines essential classes such as SearchQueryError for managing search query errors gracefully and SearchResultsParser for extracting structured information from LLM responses. These classes enhance the system's fault tolerance and ensure consistent downstream processing of responses.

Semantic Caching

A semantic caching layer, implemented using the SearchCache class, stores and retrieves documents using vector embeddings for efficient similarity search. This caching mechanism reduces redundant API calls and improves response times for related queries.

Intelligent Assistant Initialization

The core components of the AI assistant, including a semantic SearchCache, EnhancedTavilyRetriever, and ConversationBufferMemory, are initialized to create a structured prompt for guiding the LLM as a research assistant.

Enhanced Functions

Functions such as retrieve_with_fallback and summarize_documents enhance the assistant's intelligence and efficiency by implementing a hybrid retrieval mechanism and generating concise summaries from retrieved documents.

End-to-End Reasoning Workflow

The advanced_chain function defines a modular, end-to-end reasoning workflow for answering user queries using cached or real-time search. This workflow enables flexible experimentation with models and retrieval strategies while maintaining conversation coherence.

Final Components

The tutorial concludes with the initialization of the final components of the intelligent assistant, including the reasoning pipeline (qa_chain) and query analysis function (analyze_query). An example query is used to showcase the assistant's capabilities in full-stack inference and semantic interpretation.

Real-Time Demonstration

The tutorial demonstrates the complete pipeline in action, performing searches, analyzing queries, and visualizing search performance in real-time. The system's capabilities in bridging real-time web intelligence with conversational AI are showcased effectively.

Conclusion

In conclusion, following this tutorial provides users with a blueprint for creating a highly capable, context-aware, and scalable RAG system. The integration of tools like Tavily Search API, Gemini LLM, and LangChain framework enables the development of advanced features such as domain-specific filtering, query analysis, and fallback strategies, making the system suitable for real-world deployment.

Check out the Colab Notebook.