Israeli military develops ChatGPT-like AI tool to spy on Palestinians

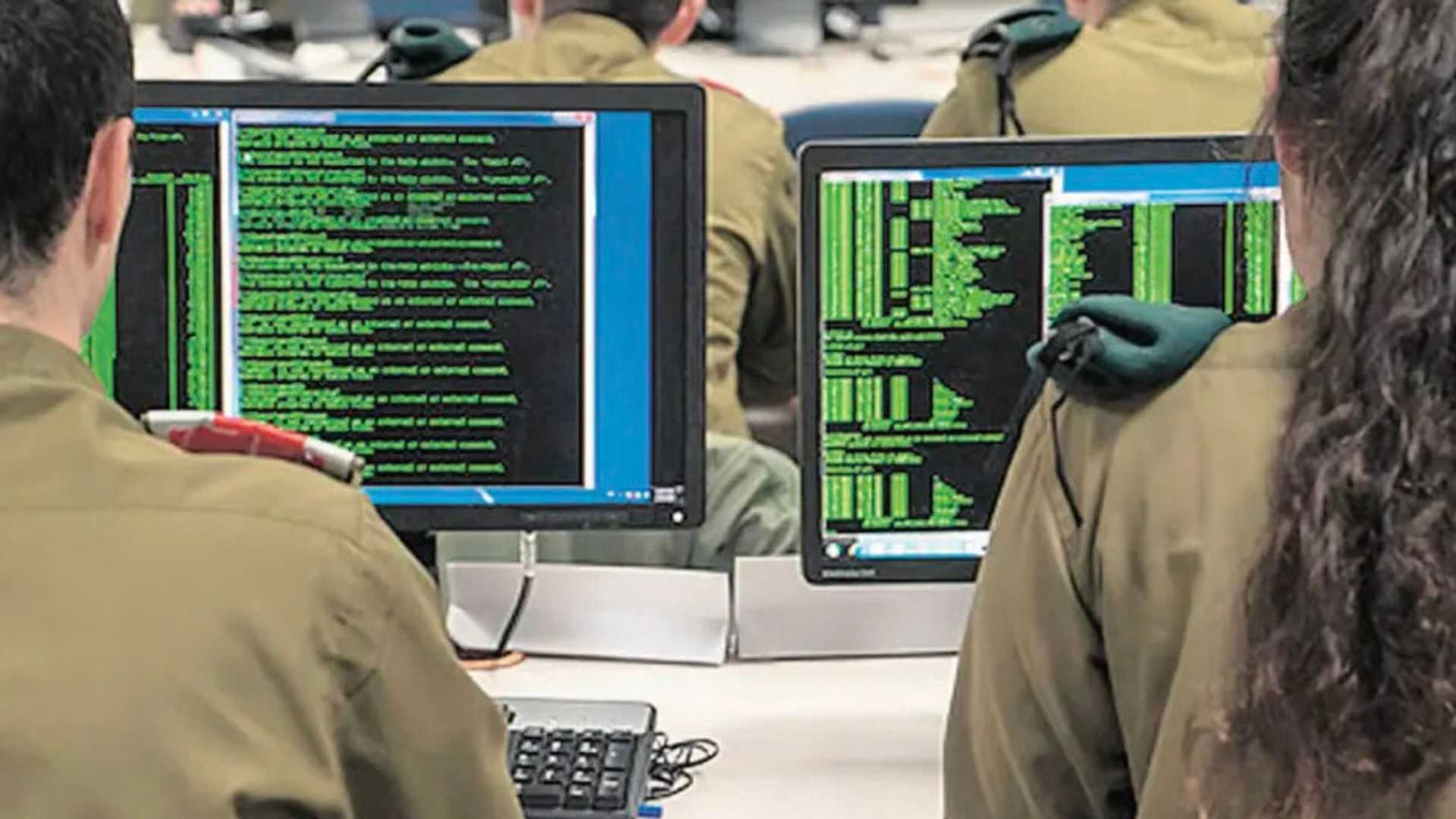

The Israeli military's electronic spying Unit 8200 has used a vast collection of intercepted communications to develop an artificial intelligence model similar to ChatGPT aimed at snooping on Palestinians, a new investigation reveals. The probe, whose findings were released on Thursday, was conducted by the British daily The Guardian, the Israeli-Palestinian publication +972 Magazine, and the Hebrew-language outlet Local Call.

Development of AI Model

Unit 8200 trained the AI model to understand spoken Arabic using large volumes of telephone conversations and text messages, obtained through its extensive surveillance of the occupied territories. The spying unit accelerated its development of the tool after the start of Israel's genocidal war on the Gaza Strip on October 7, 2023, and trained it in the second half of 2024. It is not clear whether the model has yet been deployed by the occupation's army.

Creation of Dataset

Chaked Roger Joseph Sayedoff, a former official, told a military AI conference in Tel Aviv that the developers had aimed to create the largest possible Arabic-language dataset, an effort that required "psychotic amounts" of data.

Capabilities of the AI Tool

Sources familiar with the project said the sophisticated chatbot-like tool can answer questions about individuals and provide insights into the massive volumes of surveillance data the unit collects. It can be used, for example, to track human rights activists and to monitor Palestinian construction in the occupied West Bank.

Concerns and Criticisms

Nadim Nashif, director of the digital rights and advocacy group 7amleh, expressed concern that Palestinians are being used as subjects in Israel’s laboratory for developing AI-based surveillance techniques that serve to maintain apartheid and occupation. Zach Campbell of Human Rights Watch criticized the use of surveillance material to train the AI model as invasive and incompatible with human rights.

Impact of AI Tools

During the Gaza onslaught, Israel used AI-powered tools such as Gospel and Lavender to generate targets for airstrikes and assassinations. Despite the high number of casualties, Israel failed to achieve its objectives and accepted Hamas’s negotiation terms for a ceasefire.

For more news and updates, visit Press TV’s website at www.presstv.ir.