OpenAI ChatGPT Voice Update: Exclusive Access Begins Next Week

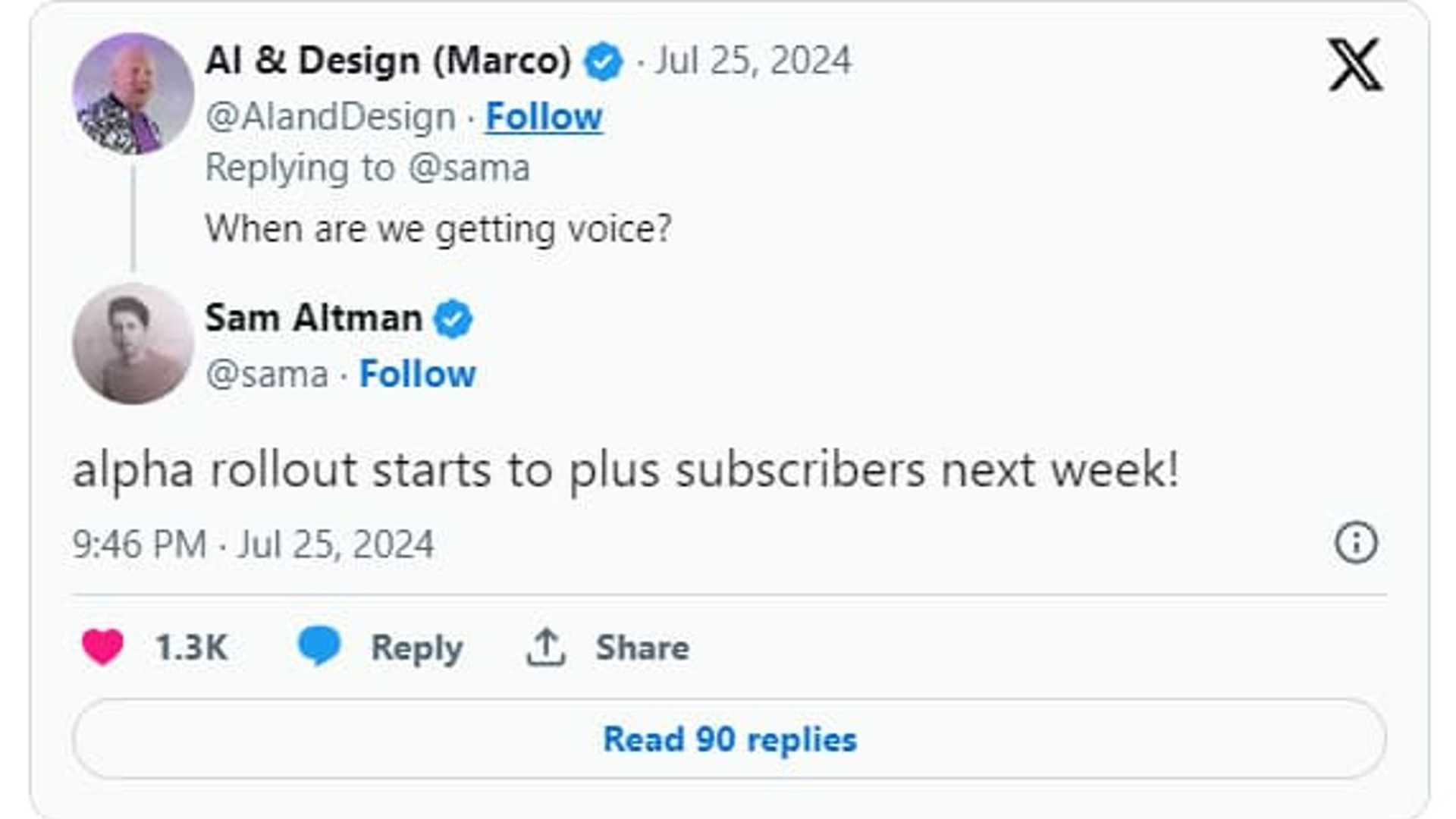

OpenAI is set to unveil an enhanced "Voice Mode" for its advanced GPT-4o model in ChatGPT, exclusively available to Plus subscribers. CEO Sam Altman recently confirmed on X (formerly Twitter) that this new feature will make its debut next week through a limited "alpha" release.


GPT-4o Model Upgrade

The GPT-4o model was introduced in May 2024 and represents a significant enhancement to ChatGPT's voice interaction capabilities. Voice Mode is already accessible across all membership tiers, but the existing version has notable limitations.

The current Voice Mode suffers from latency, with response times averaging 2.8 seconds for GPT-3.5 and 5.4 seconds for GPT-4. The delay stems from a pipeline of three separate models: one transcribes audio to text, one processes the text, and one converts the reply back to speech. OpenAI acknowledges that this approach loses a substantial amount of information, because the core AI model only receives the transcribed text and cannot directly observe tone, multiple speakers, or background noise.
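The three-stage pipeline described above can be illustrated with a minimal Python sketch. Everything here is hypothetical: the names `AudioInput`, `transcribe`, `generate_reply`, `synthesize`, and `cascaded_voice_turn` are invented for illustration and are not OpenAI APIs, and the per-stage latencies are placeholder values, not measurements. The sketch only shows why the stage delays add up and why anything other than the words never reaches the text model.

```python
from dataclasses import dataclass, fields


@dataclass
class AudioInput:
    """Hypothetical container for what a spoken utterance carries."""
    words: str
    tone: str
    speaker_count: int
    background_noise: str


@dataclass
class StageResult:
    payload: object
    latency_s: float  # placeholder value, not a real measurement


def transcribe(audio: AudioInput) -> StageResult:
    # Stage 1 (speech-to-text): only the words survive this step.
    return StageResult(audio.words, latency_s=1.0)


def generate_reply(text: str) -> StageResult:
    # Stage 2 (text model): sees plain text and nothing else.
    return StageResult(f"Reply to: {text}", latency_s=1.0)


def synthesize(text: str) -> StageResult:
    # Stage 3 (text-to-speech): stand-in that returns bytes instead of audio.
    return StageResult(text.encode("utf-8"), latency_s=1.0)


def cascaded_voice_turn(audio: AudioInput) -> tuple[bytes, float, list[str]]:
    """Run the three stages in sequence; report total delay and dropped fields."""
    stt = transcribe(audio)
    llm = generate_reply(stt.payload)
    tts = synthesize(llm.payload)
    # Stages run one after another, so their latencies sum.
    total_latency = stt.latency_s + llm.latency_s + tts.latency_s
    # Every field except the words was discarded at stage 1.
    lost = [f.name for f in fields(audio) if f.name != "words"]
    return tts.payload, total_latency, lost


speech, total_latency, lost = cascaded_voice_turn(
    AudioInput("hello", tone="excited", speaker_count=2, background_noise="traffic")
)
print(total_latency, lost)
```

In this toy version the reply is built from the string `"hello"` alone; the tone, speaker count, and background noise are dropped before the text model runs, which is the information loss the article refers to.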

Benefits of GPT-4o

GPT-4o addresses these challenges by using a single neural network trained end-to-end across text, vision, and audio, so every input and output is processed by the same model. This integrated approach offers several advantages:

- Enhanced ability to handle interruptions

- Ability to manage group discussions

- Improved background noise filtering

- Adaptability to various tones

OpenAI also emphasizes GPT-4o's improved performance in handling diverse conversational scenarios and challenges.

Future of AI Interaction

The upgraded Voice Mode for GPT-4o marks a significant milestone in OpenAI's ongoing efforts to develop more intuitive and seamless AI interactions. As the technology progresses, users can expect increasingly sophisticated and responsive AI communication experiences.

For more information about ChatGPT's upcoming alpha Voice Mode, visit Chatgpt.com, and follow promptblueprints.tech for the latest developments.