Chat with Your Images Using Llama 3.2-Vision Multimodal LLMs

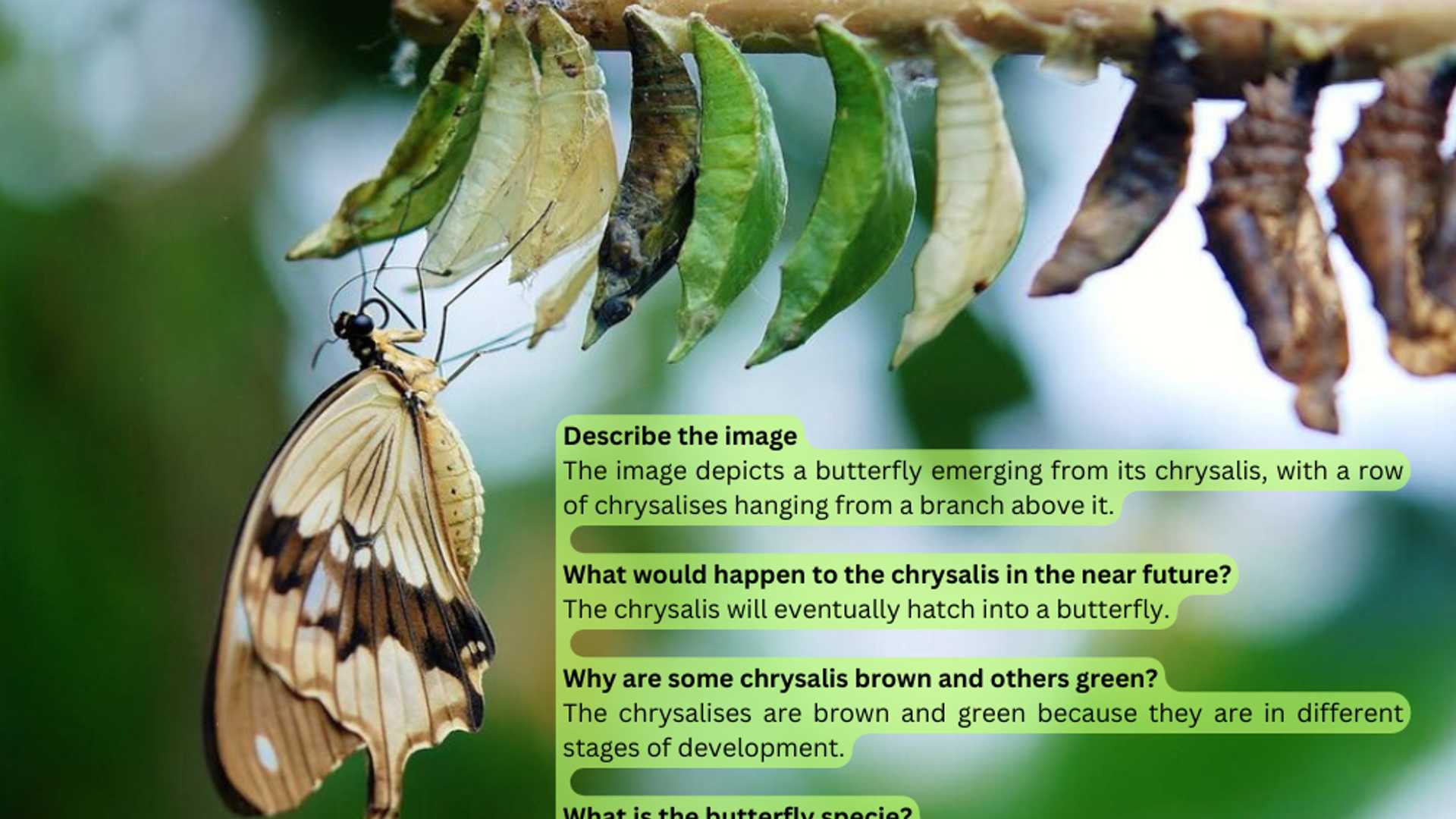

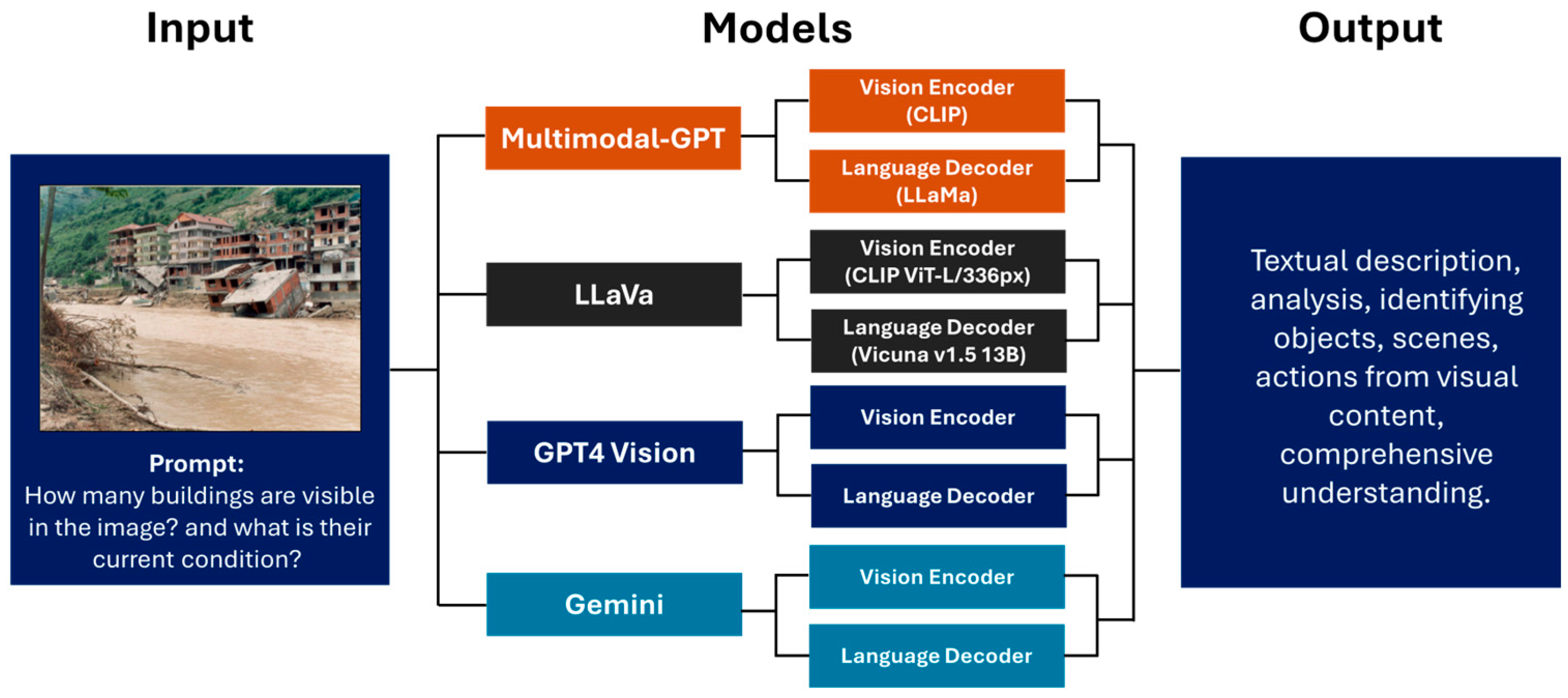

The integration of vision capabilities with Large Language Models (LLMs) is revolutionizing the computer vision field through multimodal LLMs (MLLM). These models combine text and visual inputs, showing impressive abilities in image understanding and reasoning. While these models were previously accessible only via APIs, recent open source options now allow for local execution, making them more appealing for production environments.

In this tutorial, we will learn how to chat with our images using the open source Llama 3.2-Vision model, and you’ll be amazed by its OCR, image understanding, and reasoning capabilities. All the code is conveniently provided in a handy Colab notebook.

Background

Llama, short for “Large Language Model Meta AI” is a series of advanced LLMs developed by Meta. Their latest, Llama 3.2, was introduced with advanced vision capabilities. The vision variant comes in two sizes: 11B and 90B parameters, enabling inference on edge devices. With a context window of up to 128k tokens and support for high resolution images up to 1120x1120 pixels, Llama 3.2 can process complex visual and textual information.

Architecture

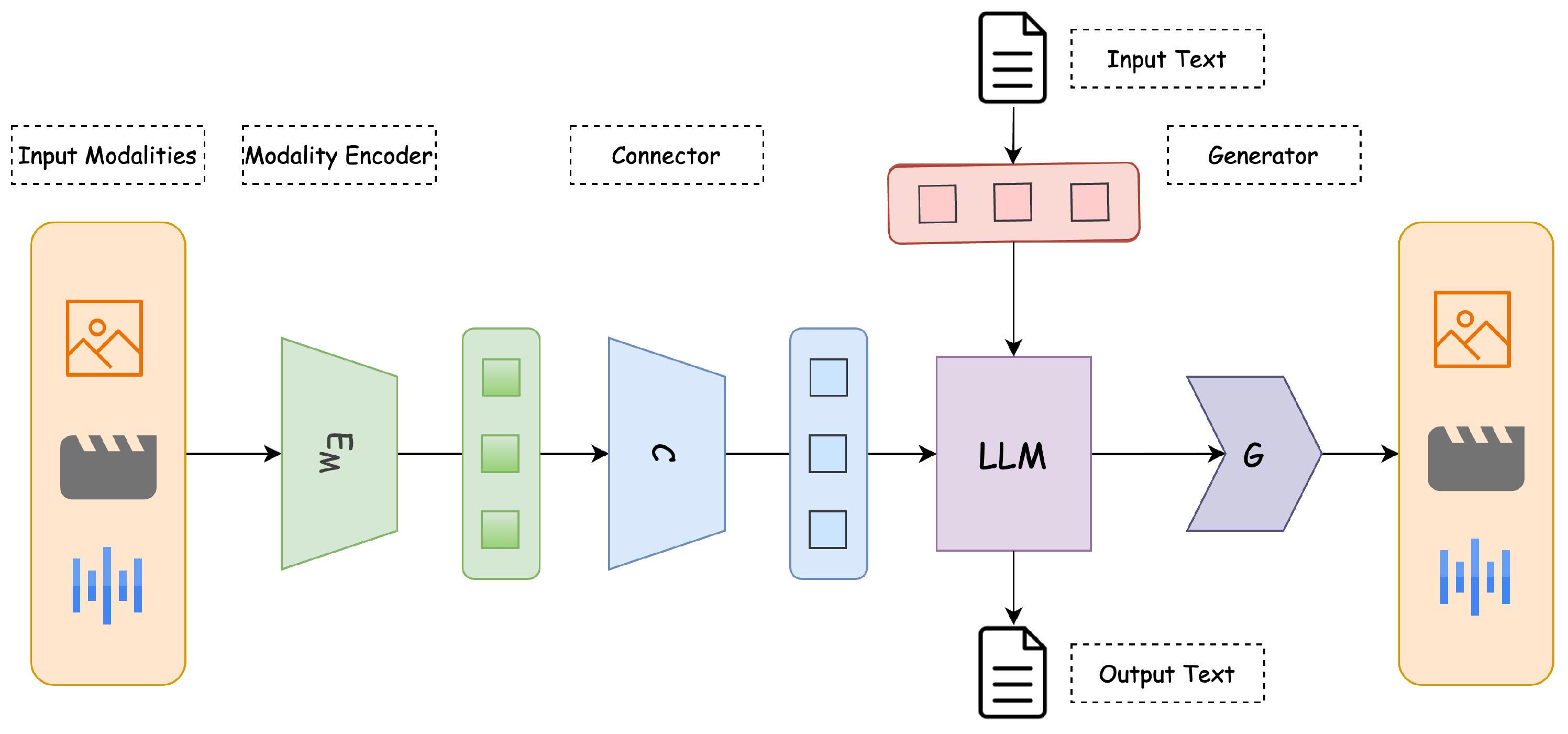

The Llama series of models are decoder-only Transformers. Llama 3.2-Vision is built on top of a pre-trained Llama 3.1 text-only model. It utilizes a standard, dense auto-regressive Transformer architecture, that does not deviate significantly from its predecessors, Llama and Llama 2.

To support visual tasks, Llama 3.2 extracts image representation vectors using a pre-trained vision encoder (ViT-H/14), and integrates these representations into the frozen language model using a vision adapter. The adapter consists of a series of cross-attention layers that allow the model to focus on specific parts of the image that correspond to the text being processed.

The adapter is trained on text-image pairs to align image representations with language representations. This design allows Llama 3.2 to excel in multimodal tasks while maintaining its strong text-only performance.

Loading The Model

Once we’ve set up the environment and acquired the necessary permissions, we will use the Hugging Face Transformers library to instantiate the model and its associated processor.

Expected Chat Template

Chat templates maintain context through conversation history by storing exchanges between the “user” and the “assistant”. The conversation history is structured as a list of dictionaries called messages, where each dictionary represents a single conversational turn, including both user and model responses.

Main function

For this tutorial, I provided a chat_with_mllm function that enables dynamic conversation with the Llama 3.2 MLLM. This function handles image loading, pre-processes both images and the text inputs, generates model responses, and manages the conversation history to enable chat-mode interactions.

Meme Image Example

In this example, I will show the model a meme I created myself, to assess Llama’s OCR capabilities and determine whether it understands my sense of humor.

As we can see, the model demonstrates great OCR abilities, and understands the meaning of the text in the image.

Want to learn more?

[0] Code on Colab Notebook: link

[1] The Llama 3 Herd of Models

[2] Llama 3.2 11B Vision Requirements

Your home for data science and AI. The world’s leading publication for data science, data analytics, data engineering, machine learning, and artificial intelligence professionals.

Deep Learning expert. Passionate about Computer Vision and Vision-Language models.