OpenAI: OpenAI's safety committee to oversee security practices as ...

OpenAI, a leading artificial intelligence research lab, has announced the formation of a safety committee to oversee the security practices surrounding its advanced AI systems. The committee will play a central role in overseeing the policies and protocols that govern the development and deployment of AI technologies.

The Role of OpenAI's Safety Committee

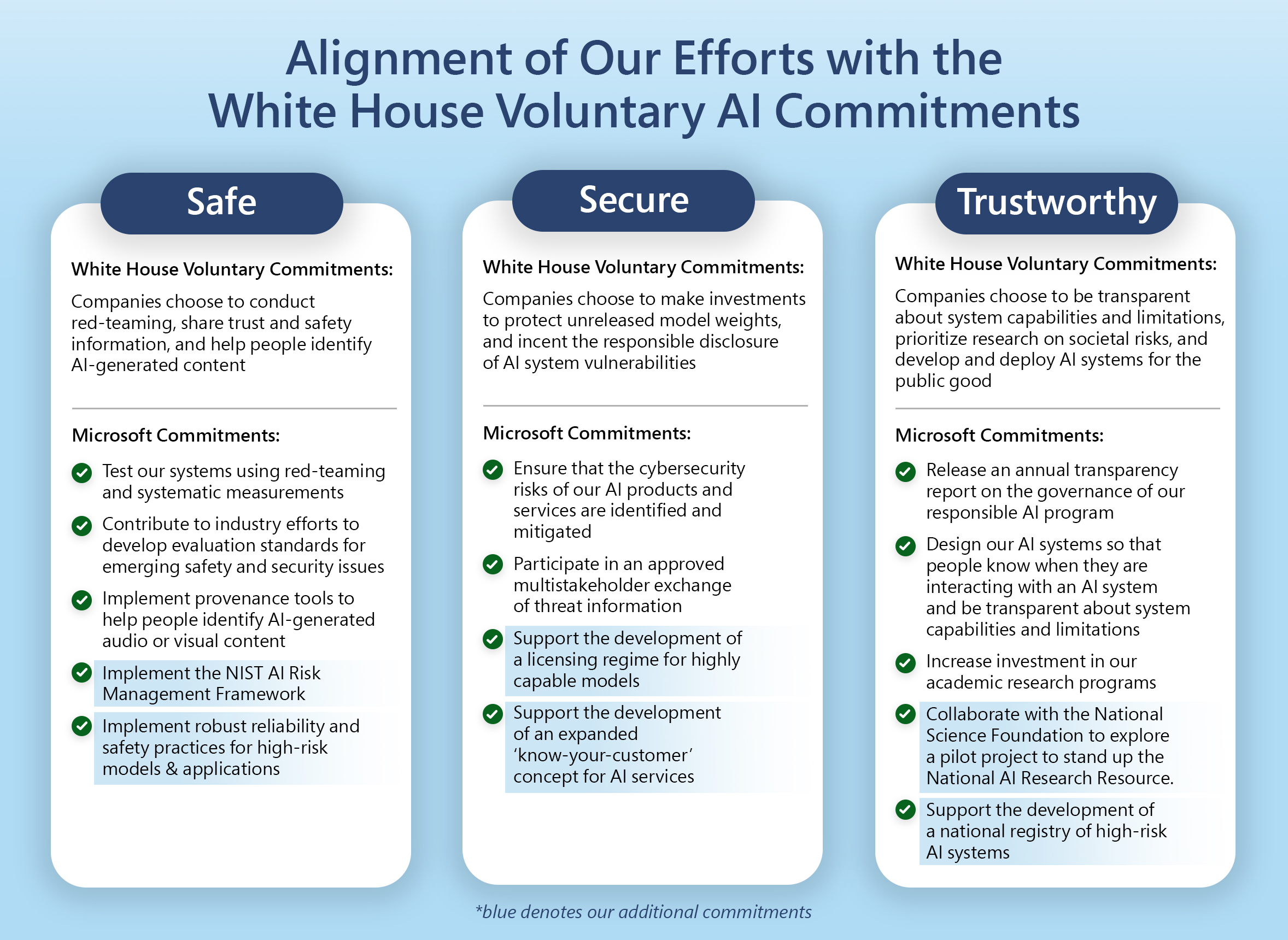

The safety committee at OpenAI will be responsible for evaluating the potential risks associated with AI systems and implementing measures to mitigate these risks. This includes assessing the impact of AI technologies on society, identifying ethical implications, and ensuring that the AI systems are aligned with the organization's values and principles.

Collaboration and Transparency

OpenAI places a strong emphasis on collaboration and transparency in AI research and development. The safety committee will work closely with researchers, engineers, and external stakeholders to address safety concerns and promote responsible AI innovation.

Transparency is also key to the committee's operations. OpenAI is committed to sharing information about its safety practices and engaging in open dialogue with the AI community to foster trust and accountability.

Building Trust in AI

By establishing a dedicated safety committee, OpenAI aims to build trust in AI technologies and demonstrate its commitment to ensuring the responsible and ethical use of artificial intelligence. The committee's oversight will help safeguard against potential risks and ensure that AI systems are designed and deployed in a manner that prioritizes safety and security.

Through proactive risk assessment, continuous monitoring, and robust governance processes, OpenAI's safety committee sets a new standard for AI safety practices in the industry.