Google's Gemini 1.5 Pro Will Have 2 Million Tokens. Here's What ...

No, not bus or arcade-game tokens. This form of token refers to the building blocks used by artificial intelligence systems.

Google Expands Context Window in Gemini 1.5 Pro

Google is expanding Gemini 1.5 Pro's context window to 2 million tokens for developers in private preview. In the world of large language models, the tech underpinning generative AI, size matters, and Google said it's allowing users to feed its Gemini 1.5 Pro model more data than ever.

Increased Context Window

During the Google I/O developers conference on Tuesday, Alphabet CEO Sundar Pichai said Google is increasing Gemini 1.5 Pro's context window from 1 million to 2 million tokens. Pichai said the update will be made available to developers in "private preview," but stopped short of saying when it may be available more broadly.

"It's amazing to look back and see just how much progress we've made in a few months," Pichai said after announcing that Google is doubling Gemini 1.5 Pro's context window. "And this represents the next step on our journey towards the ultimate goal of infinite context."

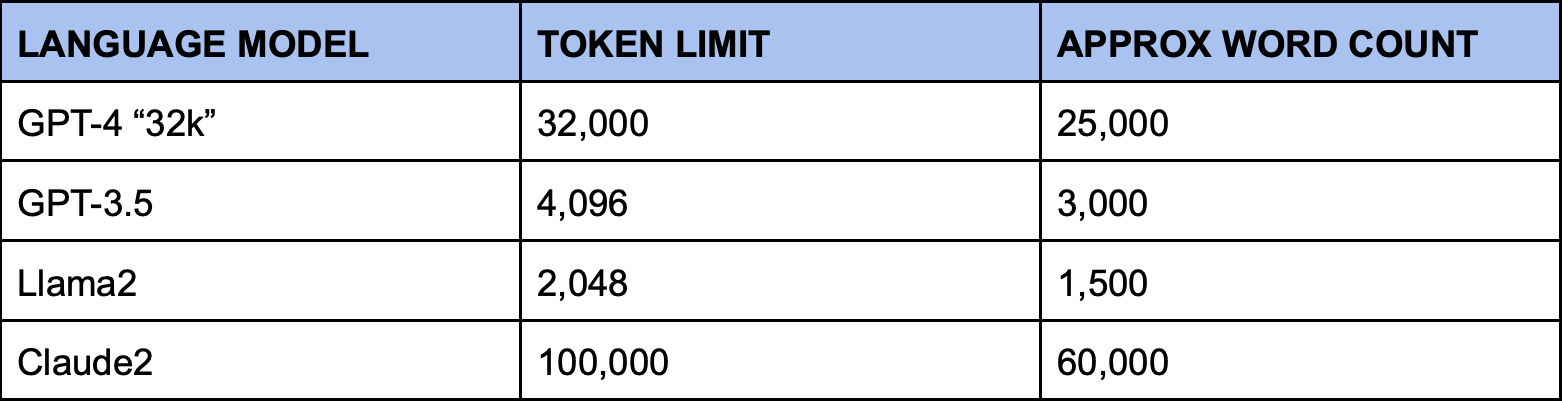

Understanding Tokens in AI

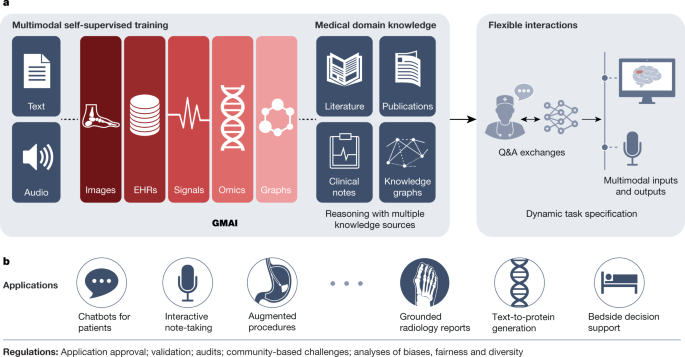

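Large language models, or LLMs, like Gemini 1.5 Pro are AI models trained on enormous amounts of data to understand language, so that tools like Gemini can generate content that humans can understand. To do that, they break text down into smaller units called tokens, which can be whole words, parts of words, or punctuation marks.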

As AI models add the ability to analyze images, video, and audio, they similarly use tokens to get the full picture. Tokens are used both as inputs and outputs, enabling AI models to process queries and deliver responses effectively.
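As a rough illustration of what splitting text into tokens can look like, here is a minimal Python sketch. The function name `toy_tokenize` is hypothetical, and the rule it uses (words and punctuation marks become separate tokens) is a simplifying assumption. It is not the tokenizer Gemini uses, and real models typically split text into subword pieces, so actual token counts will differ.

```python
import re


def toy_tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens.

    Assumption for illustration only: production LLM tokenizers use
    learned subword vocabularies, not a simple regular expression.
    """
    # \w+ matches a run of letters, digits, or underscores (a "word");
    # [^\w\s] matches any single character that is neither a word
    # character nor whitespace (a punctuation mark).
    return re.findall(r"\w+|[^\w\s]", text)


if __name__ == "__main__":
    tokens = toy_tokenize("Tokens are building blocks.")
    print(tokens)       # ['Tokens', 'are', 'building', 'blocks', '.']
    print(len(tokens))  # 5
```

Even in this toy version, the token count is not the same as the word count, which is why context limits are quoted in tokens rather than words.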

Importance of Context Window

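No conversation about tokens is complete without explaining the context window. A context window is the amount of information, measured in tokens, that an AI model can take into account at one time. It lets the model keep track of earlier information and reuse it to deliver better results to users. In general, the larger the context window, the more tokens can be used in a dialogue, which tends to improve results.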
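To make the idea of a token budget concrete, the sketch below shows one simple way an application might keep a dialogue within a context window: it keeps the most recent messages and drops the oldest ones once the budget is used up. Everything here is an assumption for illustration. The function `fit_to_context` is hypothetical, tokens are approximated by counting whitespace-separated words, and nothing in it reflects how Gemini itself manages context.

```python
def fit_to_context(messages: list[str], max_tokens: int) -> list[str]:
    """Return the most recent messages that fit within max_tokens.

    Assumption: one whitespace-separated word counts as one token.
    Real token counts come from the model's own tokenizer.
    """
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message so recent context wins.
    for message in reversed(messages):
        cost = len(message.split())
        if used + cost > max_tokens:
            break  # This message and everything older no longer fits.
        kept.append(message)
        used += cost
    kept.reverse()  # Restore chronological order.
    return kept


if __name__ == "__main__":
    dialogue = [
        "What is a token?",
        "A token is a small unit of text.",
        "And what is a context window?",
    ]
    # With a small budget, the oldest messages are dropped first.
    print(fit_to_context(dialogue, max_tokens=14))
    # With a larger budget, the whole dialogue fits.
    print(fit_to_context(dialogue, max_tokens=100))
```

With a bigger budget, fewer earlier messages have to be discarded, which is the practical benefit a larger context window offers.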

Google's updated context window on Gemini 1.5 Pro is a significant development for developers in the private preview. Doubling the limit from 1 million to 2 million tokens lets them feed the model twice as much data at once, which could greatly enhance the capabilities and performance of Google's LLM.

While "infinite context" remains a long-term goal, larger context windows are paving the way for more sophisticated AI models. As the technology progresses, further increases in context windows can be expected from various providers, which should improve the user experience and outcomes.