Google I/O 2024: Gemini 1.5 Pro Gets Big Upgrade as New Flash and Gemma AI Models Unveiled

Google recently held the keynote session of its annual developer-focused Google I/O event, and the focus was on new developments in artificial intelligence (AI). The tech giant introduced several new AI models and added features to its existing ones. A significant announcement was a two million token context window for Gemini 1.5 Pro, now available to developers through a waitlist.

Key Highlights

CEO Sundar Pichai opened the event with a major announcement: a two million token context window for Gemini 1.5 Pro. A one million token context window was introduced earlier this year, but it was limited to developers. Google has now made it available in public preview through Google AI Studio and Vertex AI. The two million token context window, by contrast, is accessible only via a waitlist, to developers using the API and to Google Cloud customers.

Google claims that, with a context window of two million tokens, the AI model can process large amounts of data in one go: two hours of video, 22 hours of audio, over 60,000 lines of code, or more than 1.4 million words. The tech giant has also enhanced Gemini 1.5 Pro's capabilities in code generation, logical reasoning, planning, and multi-turn conversation, as well as its understanding of images and audio. The AI model will also be integrated into Gemini Advanced and Workspace apps.
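To put the quoted capacity figures in perspective, the short Python sketch below derives the average token cost per unit that they imply. It is a rough back-of-the-envelope calculation, not Google's tokenizer: the function names are hypothetical, and because the article gives the code and word figures as lower bounds ("over", "more than"), the implied per-line and per-word costs are upper bounds. Real token counts depend on the content and the model's tokenizer.

```python
# Capacity figures quoted in the article for a two million token context window.
CONTEXT_TOKENS = 2_000_000
QUOTED_CAPACITY = {
    "video_hours": 2,
    "audio_hours": 22,
    "code_lines": 60_000,   # article says "over 60,000"
    "words": 1_400_000,     # article says "more than 1.4 million"
}


def tokens_per_unit(context_tokens: int, capacity: dict) -> dict:
    """Return the implied average token cost of one unit of each content type."""
    return {name: context_tokens / amount for name, amount in capacity.items()}


def estimate_tokens_for_words(word_count: int) -> int:
    """Estimate tokens for a text using the implied words-to-tokens ratio.

    Uses integer ceiling division so the estimate never rounds down.
    This is an assumption-based estimate, not a real tokenizer count.
    """
    numerator = word_count * CONTEXT_TOKENS
    return -(-numerator // QUOTED_CAPACITY["words"])


if __name__ == "__main__":
    for name, cost in tokens_per_unit(CONTEXT_TOKENS, QUOTED_CAPACITY).items():
        print(f"{name}: about {cost:,.1f} tokens per unit")
    print("700,000 words:", estimate_tokens_for_words(700_000), "tokens (estimate)")
```

By these figures, an hour of video accounts for roughly a million tokens, while a word of text averages under one and a half tokens, which is why text-heavy workloads stretch the window much further than video.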

New AI Models

Google also introduced a new addition to its Gemini family of AI models: Gemini 1.5 Flash. This lightweight model is designed to be faster, more responsive, and more cost-efficient. While it may not excel at complex tasks, it is proficient at summarization, chat applications, image and video captioning, data extraction from long documents and tables, and more.

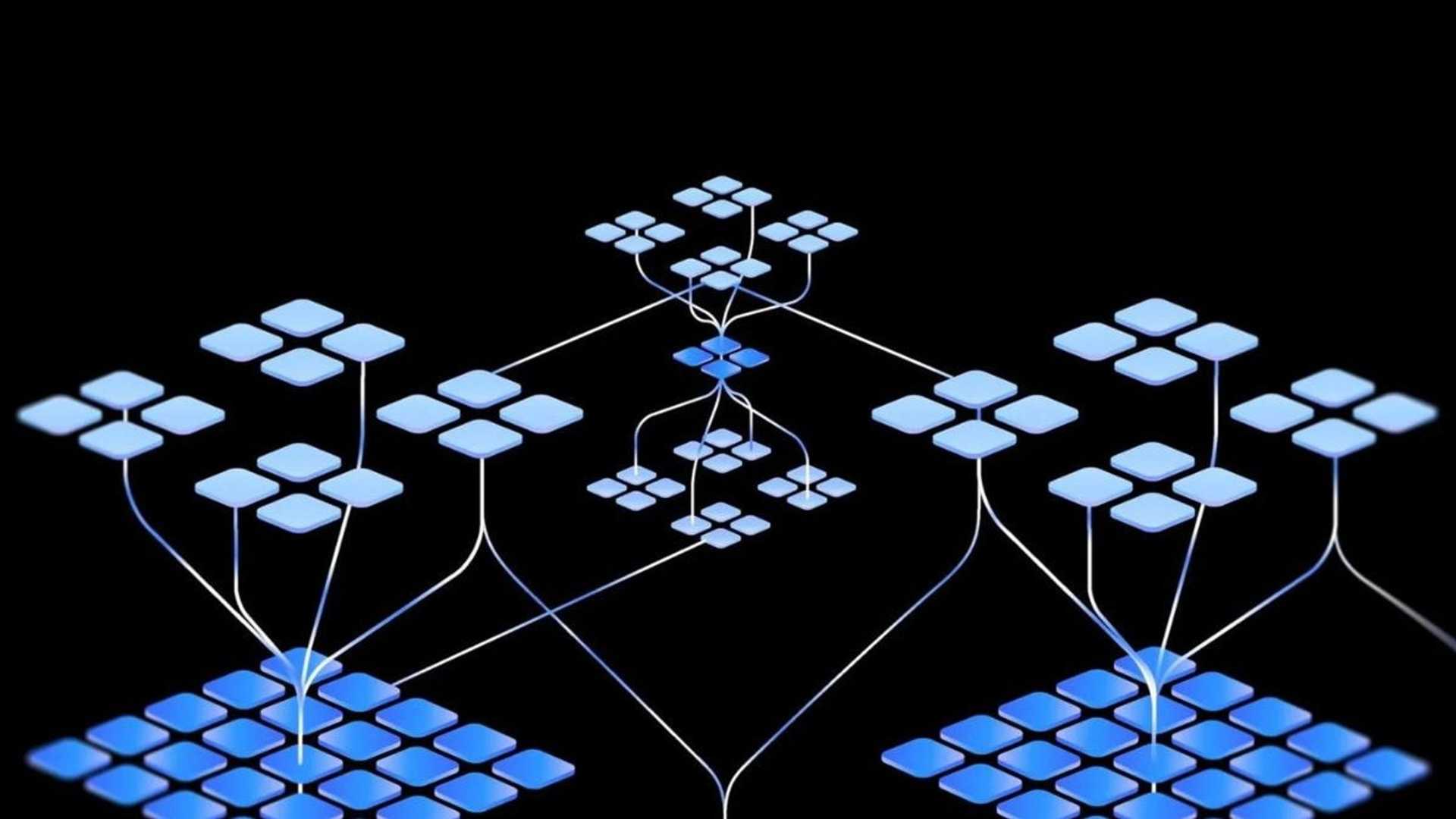

Additionally, the tech giant unveiled the next generation of its smaller AI models, Gemma 2. With 27 billion parameters, Gemma 2 can efficiently run on GPUs or a single TPU. Google claims that Gemma 2 outperforms models twice its size. Benchmark scores for the model are yet to be released.
