Google Boosts LiteRT and Gemini Nano for On-Device AI Efficiency

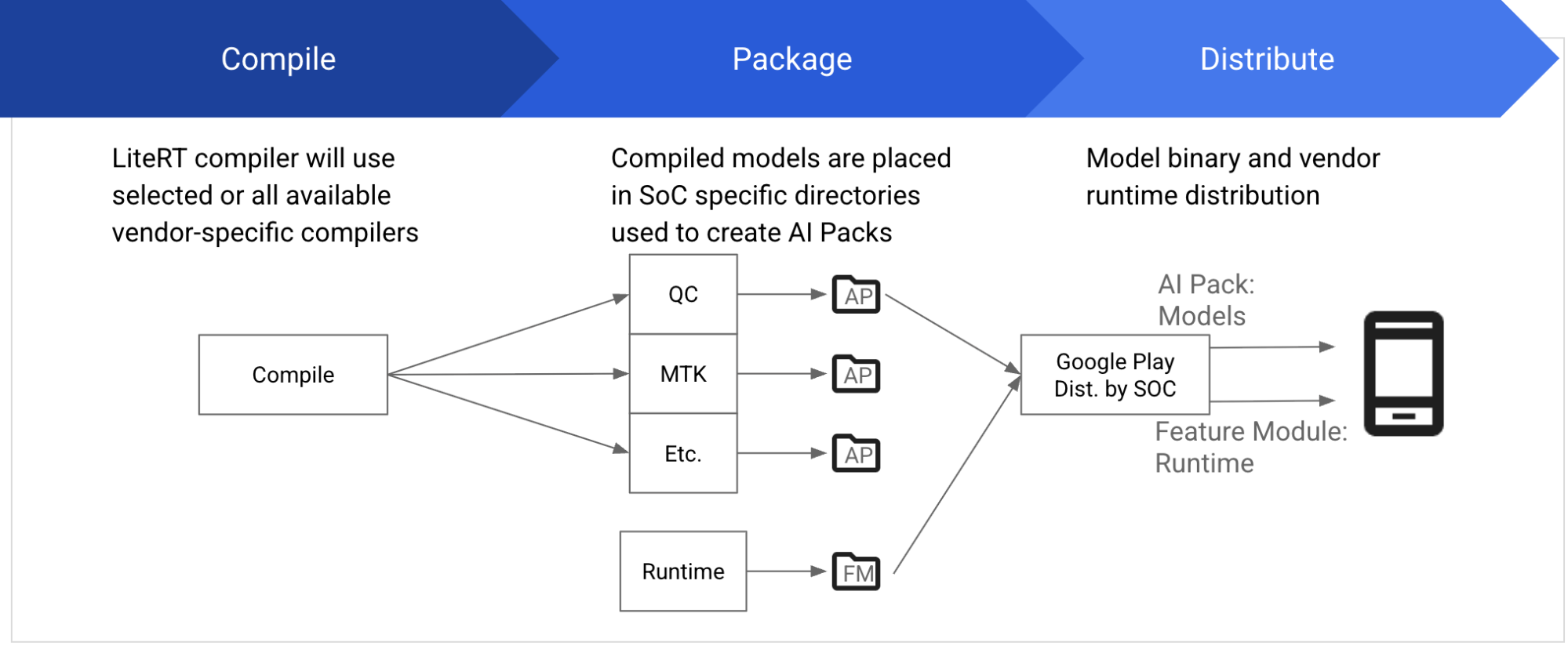

The new release of LiteRT, formerly known as TensorFlow Lite, introduces a streamlined API for on-device machine learning (ML) inference, enhanced GPU acceleration, and support for Qualcomm and MediaTek NPU (Neural Processing Unit) accelerators. The goal is to let developers use GPU and NPU acceleration without writing against hardware-specific APIs or vendor SDKs.

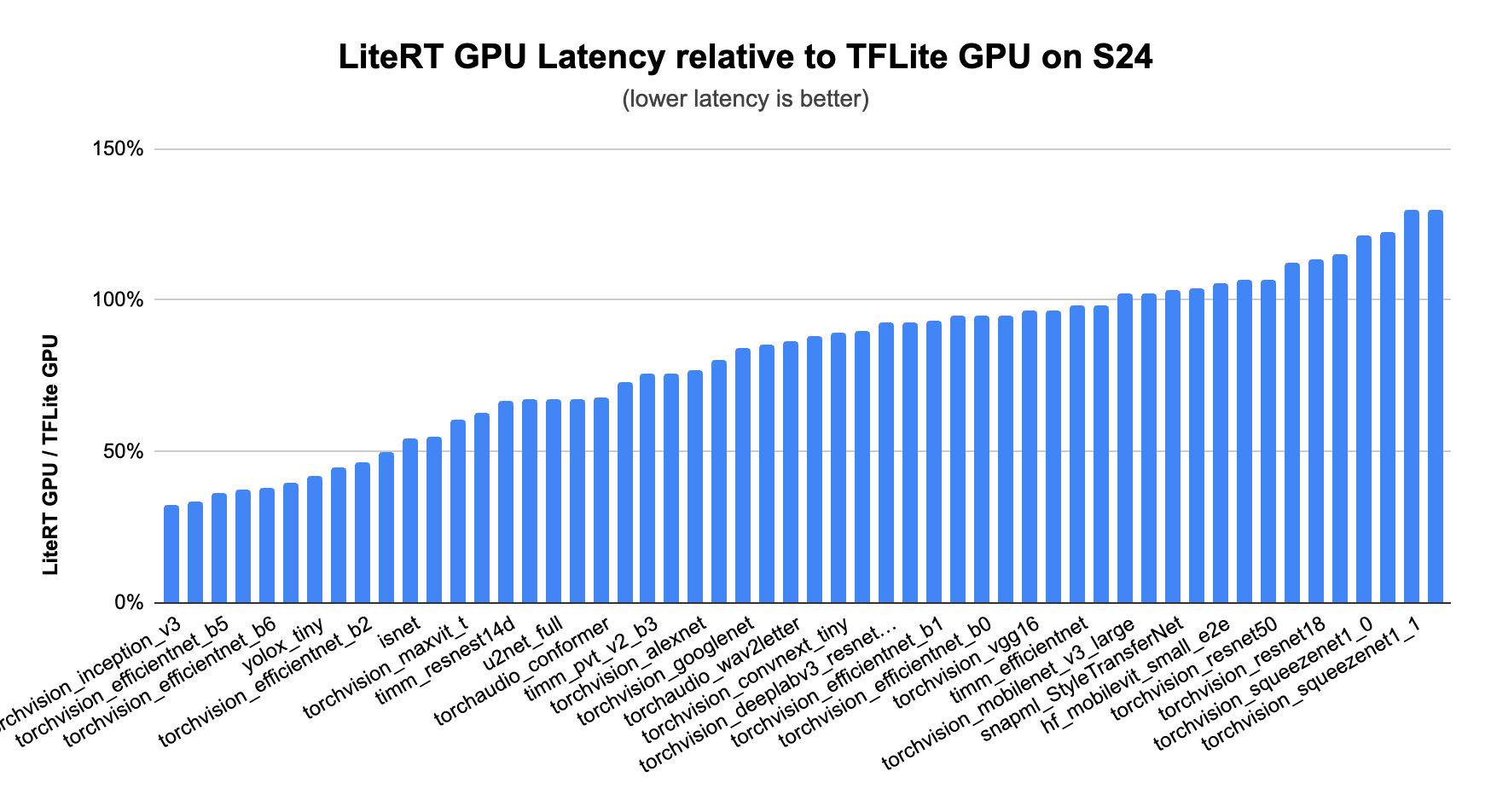

The LiteRT release features MLDrift, a new GPU acceleration implementation that offers more efficient tensor-based data organization, context-aware smart computations, and optimized data transfer. According to Google, this makes CNN and Transformer models run significantly faster than on CPUs, and faster than with previous versions of TFLite’s GPU delegate.

LiteRT can be downloaded from GitHub and includes sample apps that demonstrate its capabilities.

The latest LiteRT APIs simplify the setup for GPUs and NPUs. Developers specify the target backend when they create the compiled model, so creating a compiled model that targets the GPU takes only a few lines of code.
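As a rough illustration of the backend selection described above, the following Python sketch models the idea of naming a target accelerator once, at model-creation time. Every name in it (`Accelerator`, `CompiledModel`, `create_compiled_model`, the file name `mymodel.tflite`) is hypothetical and is not the LiteRT API; the fallback to CPU when the requested backend is missing is also an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Accelerator(Enum):
    """Hypothetical backend identifiers, for illustration only."""
    CPU = "cpu"
    GPU = "gpu"
    NPU = "npu"


@dataclass(frozen=True)
class CompiledModel:
    """Hypothetical stand-in for a model compiled for one backend."""
    model_path: str
    accelerator: Accelerator


def create_compiled_model(model_path, preferred, available):
    """Compile for the preferred backend, or fall back to CPU.

    `available` is the set of backends the (imaginary) device exposes.
    The CPU fallback is an assumption of this sketch.
    """
    chosen = preferred if preferred in available else Accelerator.CPU
    return CompiledModel(model_path, chosen)


# The caller states the target backend once; no vendor-specific code appears here.
device_backends = {Accelerator.CPU, Accelerator.GPU}
gpu_model = create_compiled_model("mymodel.tflite", Accelerator.GPU, device_backends)
npu_model = create_compiled_model("mymodel.tflite", Accelerator.NPU, device_backends)
print(gpu_model.accelerator.name, npu_model.accelerator.name)
```

The point of the sketch is the shape of the call: the backend is a single argument, and application code stays the same whichever accelerator ends up being used.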

This ease of use is complemented by features that improve inference performance, particularly in memory-constrained environments. The TensorBuffer API lets a model consume data that already resides in hardware memory directly, which avoids extra copies through the CPU and reduces overhead.
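The benefit of using data in place can be shown with a small analogy in plain Python. This is not the TensorBuffer API and involves no GPU or NPU memory; it only contrasts copying a buffer with viewing it, using the standard `bytearray` and `memoryview` types. The variable names are made up for the example.

```python
# A bytearray stands in for data that already sits in a hardware buffer.
frame = bytearray(range(8))

copied = bytes(frame)        # copy path: a second buffer is allocated and filled
shared = memoryview(frame)   # zero-copy path: a view over the same memory

# The producer updates the buffer in place.
frame[0] = 255

# The copy still holds the old value; the view sees the new one.
print(copied[0], shared[0])
```

The copy costs an allocation and a pass over the data every time, while the view costs neither, which is the kind of overhead a direct-buffer API is meant to avoid.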

The LiteRT documentation gives a comprehensive overview of these updates. Together, the changes are intended to make the mobile AI experience more consistent across devices and to enable on-device capabilities that previously required substantial cloud resources.

NPU Support and Asynchronous Execution

LiteRT now supports NPUs from Qualcomm and MediaTek, which can deliver up to 25x faster performance than CPUs while consuming one-fifth of the power. The support is meant to address the integration challenges these accelerators pose and to make it easier to deploy models for vision, audio, and NLP tasks.
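To put the two headline figures together, here is a short back-of-the-envelope calculation. It assumes that both numbers apply to the same workload, that "power" means average power draw, and that energy per inference is power multiplied by time; none of these assumptions is stated in the announcement, and since the figures are "up to" values, the result is a best case rather than a typical one. The function name `relative_energy` is made up for the example.

```python
from fractions import Fraction


def relative_energy(speedup, power_fraction):
    """Energy per inference relative to the CPU: power ratio x time ratio."""
    time_fraction = Fraction(1) / Fraction(speedup)
    return Fraction(power_fraction) * time_fraction


# 25x faster means 1/25 of the time; one-fifth of the power is a ratio of 1/5.
best_case = relative_energy(speedup=25, power_fraction=Fraction(1, 5))
print(best_case)  # 1/125
```

Under these assumptions, an inference on the NPU would use roughly 1/125 of the energy of the same inference on the CPU.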

The asynchronous execution feature enables concurrent processing across the CPU, GPU, and NPU. Running work on several processors in parallel improves efficiency and responsiveness, which is crucial for real-time AI applications.
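The effect of running work on several processors at once can be sketched with Python's `asyncio`. This is a simulation only: the sleeps stand in for real inference, the durations are made up, and the helper names `run_on` and `run_concurrently` are hypothetical, not part of LiteRT.

```python
import asyncio
import time


async def run_on(backend, seconds):
    """Simulate one backend's work; the sleep stands in for real inference."""
    await asyncio.sleep(seconds)
    return f"{backend} done"


async def run_concurrently(jobs):
    """Start all jobs at once and wait for every result."""
    start = time.perf_counter()
    results = await asyncio.gather(*(run_on(b, s) for b, s in jobs))
    return results, time.perf_counter() - start


# Made-up durations for work placed on three backends.
jobs = [("CPU", 0.10), ("GPU", 0.10), ("NPU", 0.10)]
results, elapsed = asyncio.run(run_concurrently(jobs))
sequential = sum(seconds for _, seconds in jobs)
print(results, f"{elapsed:.2f}s concurrent vs {sequential:.2f}s sequential")
```

Because the three simulated jobs overlap, the total time is close to that of the slowest job rather than the sum of all three, which is the responsiveness gain that asynchronous execution is aiming for.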

Expansion of On-Device Small Language Models

Google AI Edge has expanded support for on-device small language models (SLMs) with the introduction of over a dozen models, including the new Gemma 3 and Gemma 3n models. These models support text, image, video, and audio inputs, allowing for broader application of generative AI.

Introduction of Gemini Nano for On-Device AI

Google is set to announce new ML Kit APIs that will let developers use Gemini Nano for on-device AI. The update aims to simplify the integration of generative AI features into applications, enabling functionality such as summarization, proofreading, and image description without sending data to the cloud.