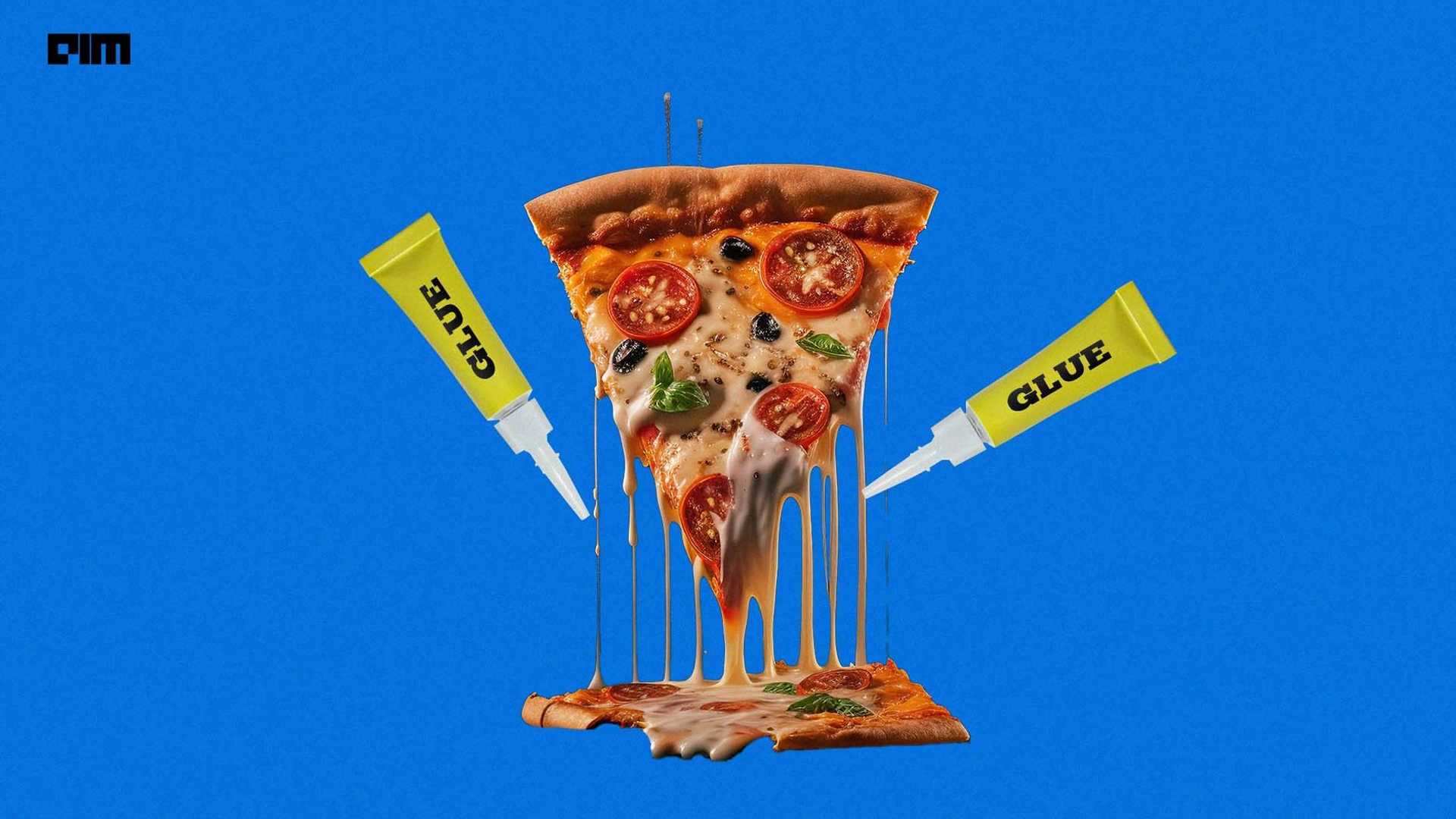

Google AI Overview's Suggestion of Adding Glue to Pizza Says a Lot ...

Google's AI Overview recently answered a query about cheese not sticking to pizza by suggesting that users add glue. The response has sparked significant interest and debate across the internet, and has led many to question the reliability of Google's generative AI search experience. This is not the first time Google's AI has made waves in this space: during the early testing phase known as the Search Generative Experience (SGE), the system reportedly advised users to 'drink a couple of litres of light-coloured urine in order to pass kidney stones.'

Gemini has also been at the centre of notable controversies. Google faced criticism from Indian IT Minister Rajeev Chandrasekhar after Gemini gave a response about Indian Prime Minister Narendra Modi that was seen as biased. Google also temporarily suspended Gemini's image-generation feature after it depicted people of colour in Nazi-era uniforms, producing historically inaccurate and insensitive visuals.

Insights from German AI Cognitive Scientist

German AI cognitive scientist Joscha Bach shed light on the implications of Google Gemini during a recent discussion at the AICamp Boston meetup. He emphasized how biases within the system can lead to inaccurate outputs that reflect societal prejudices. Bach highlighted that the biases observed in Gemini were not explicitly programmed but were inferred by the system from its interactions and the prompts it received.

He pointed out that these biases were cultivated through the input the system received, shaping its opinions on various issues. Bach suggested that such AI models can serve as a mirror of societal opinions and behaviors, and urged a deeper examination of society itself.

Addressing Misinformation and Societal Biases

AIM stressed the role of human input in perpetuating misinformation, emphasizing that responsibility lies with content creators, media platforms, and users rather than solely with AI technology. Meanwhile, OpenAI's collaborations with media agencies aim to use AI to help tackle misinformation.

AI hallucinations, a common trait of auto-regressive large language models (LLMs), have been a subject of discussion among industry experts. While some view them as a creative aspect, others see them as obstacles to providing accurate responses. Various approaches are being explored to reduce LLM hallucinations, including vector databases for retrieval-augmented generation and techniques such as Chain-of-Verification (CoVe), RAPTOR (Recursive Abstractive Processing for Tree-Organized Retrieval), and knowledge graph integration.
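Vector databases help reduce hallucinations mainly by grounding an answer in retrieved text rather than in the model's memory alone. The following is a minimal sketch of that idea under stated assumptions: it uses a toy bag-of-words vector (`embed`) in place of a learned embedding model, a hypothetical in-memory store (`InMemoryVectorStore`) in place of a real vector database, and it only builds the grounded prompt without calling an actual LLM. None of these names come from a real library, and the documents are illustrative toy text.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database: a list of (text, vector) pairs."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, doc: str) -> None:
        self._items.append((doc, embed(doc)))

    def search(self, query: str, k: int = 2) -> list[str]:
        # Exhaustive scan ranked by cosine similarity; real vector databases
        # typically use approximate nearest-neighbour indexes instead.
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]


def build_grounded_prompt(question: str, store: InMemoryVectorStore, k: int = 2) -> str:
    """Put the top-k retrieved passages in the prompt and restrict the answer to them."""
    context = "\n".join(f"- {doc}" for doc in store.search(question, k))
    return (
        "Answer using only the context below. If the context does not contain "
        "the answer, say that you don't know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


# Usage with toy documents (illustrative only).
store = InMemoryVectorStore()
store.add("Cheese can slide off pizza if the sauce layer is very wet.")
store.add("Gemini is a family of models developed by Google.")
store.add("A vector database stores embeddings for similarity search.")
print(build_grounded_prompt("Why does cheese slide off my pizza?", store, k=1))
```

In a real system, `embed` would be replaced by a learned embedding model and the linear scan in `search` by a vector database's index. The instruction to answer only from the retrieved context, and to admit when it is missing, is what links retrieval to fewer hallucinated answers.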

The Future of AI-generated Content

Advancements like vector SQL and other emerging techniques may bring hallucination-free LLMs within reach. These innovations promise more reliable and accurate AI-generated content, paving the way for a better-informed and more trustworthy AI landscape.
