Vercel and OpenAI: Not a Perfect Match

Vercel is a fantastic platform for quickly launching small projects for free. However, integrating AI services like OpenAI with Vercel can lead to unexpected issues that only surface during deployment. Fixing these errors can be time-consuming and challenging.

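Before diving into the solution, here is a quick overview of the pattern that avoids Vercel's Gateway Timeout errors when working with OpenAI: respond immediately, process the request in the background, and let the client poll for the result.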

- The endpoint

/request/initiateis responsible for starting a new request. It involves taking a user's message, generating a unique hash, and checking the database for existing requests. If a request is found, the endpoint informs the user of its status. If it's a new request, it saves it as 'pending' and initiates background processing. - The

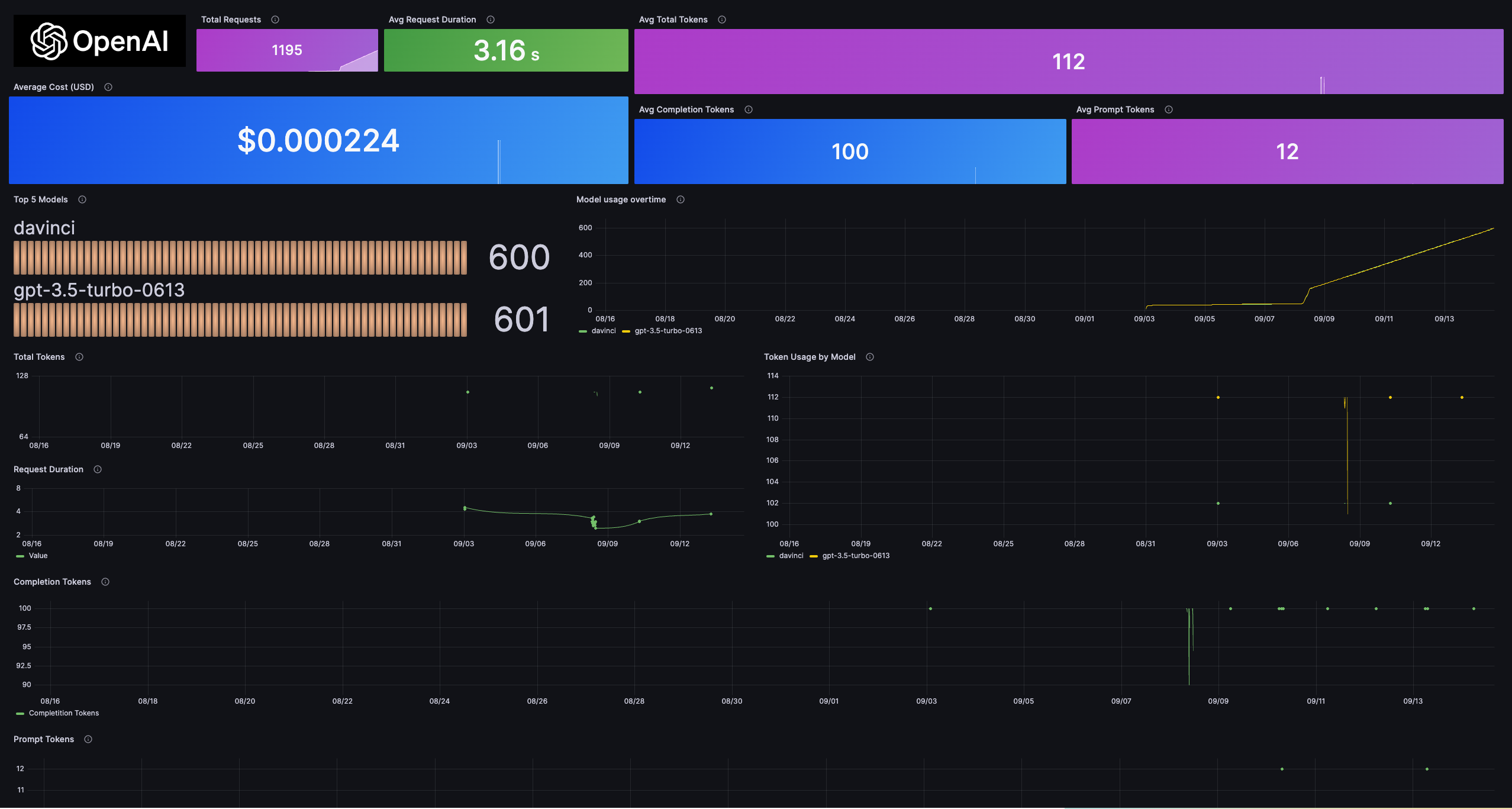

processRequestInBackgroundfunction manages the request processing. It communicates with the OpenAI API, updates the database with the result, and records the content and tokens used upon successful completion. In case of an error, it updates the status and logs the error. - The endpoint

/request/status/:hashis used to check the status of a specific request. It returns the current status, content, and tokens used. If no record is found, it provides feedback to the user.
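The three pieces described above can be sketched in a framework-agnostic way. This is a minimal illustration under stated assumptions, not the original implementation: the in-memory `requests` map stands in for the database, `hashMessage` uses SHA-256 as one possible way to generate the hash, `callModel` is a hypothetical injected function standing in for the OpenAI call, and the `'completed'` and `'failed'` status names are invented for the example. Only `processRequestInBackground` and the `'pending'` status come from the description.

```javascript
const crypto = require('node:crypto');

// Assumption: an in-memory Map stands in for the database. Serverless
// instances do not share memory, so a real deployment needs a persistent store.
const requests = new Map();

// Assumption: SHA-256 of the message is used as the unique hash, so the
// same message always maps to the same record.
function hashMessage(message) {
  return crypto.createHash('sha256').update(message).digest('hex');
}

// Logic behind /request/initiate: answer right away, do the slow work later.
// `callModel` is a hypothetical async function returning { content, tokens }.
function initiateRequest(message, callModel) {
  const hash = hashMessage(message);
  const existing = requests.get(hash);
  if (existing) {
    // Known request: report its status instead of starting it again.
    return { hash, status: existing.status };
  }
  requests.set(hash, { status: 'pending', content: null, tokens: null, error: null });
  // Deliberately not awaited, so the caller can respond immediately.
  processRequestInBackground(hash, message, callModel);
  return { hash, status: 'pending' };
}

// Runs the slow call and records the outcome. All errors are caught here,
// so the un-awaited promise above never rejects.
async function processRequestInBackground(hash, message, callModel) {
  try {
    const { content, tokens } = await callModel(message);
    requests.set(hash, { status: 'completed', content, tokens, error: null });
  } catch (err) {
    console.error(`Request ${hash} failed:`, err);
    requests.set(hash, { status: 'failed', content: null, tokens: null, error: String(err) });
  }
}

// Logic behind /request/status/:hash: report what is known about a request.
function getRequestStatus(hash) {
  const record = requests.get(hash);
  if (!record) {
    return { found: false, message: 'No request found for this hash.' };
  }
  return { found: true, status: record.status, content: record.content, tokens: record.tokens };
}
```

In a route handler, `initiateRequest` would be called with the message from the request body and its return value sent back as JSON; the client then polls the status route with the returned hash until the status is no longer `'pending'`. Whether the platform keeps the function running after the response has been sent is outside the scope of this sketch.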
