AI #109: Google Fails Marketing Forever - by Zvi Mowshowitz

What if they released the new best LLM, and almost no one noticed? Google seems to have pulled that off this week with Gemini 2.5 Pro. It’s a great model, with a ton of positive reactions, but unfortunately, it seems like no one really paid attention to it. Instead, everyone got hold of the GPT-4o image generator and went Ghibli crazy. I love that for us, but we did kind of bury the lede.

We also buried everything else. Did you know Claude now has web search? It’s kind of a big deal and a remarkable quality of life improvement.

- Gemini Pro 2.5? Never heard of her.

- Language models offer mundane utility. One big thread or many new ones?

- Language models don’t offer mundane utility. Every hero has a code.

- Huh, Upgrades. Claude has web search and a new ‘think’ tool, DS drops new v3.

- On Your Marks. Number continues to go up.

- Copyright Confrontation. Meta did the crime, is unlikely to do the time.

- Choose Your Fighter. For those still doing actual work, as in deep research.

- Deepfaketown and Botpocalypse Soon. The code word is *********.

- They Took Our Jobs. I’m Claude, and I’d like to talk to you about buying Claude.

- The Art of the Jailbreak. You too would be easy to hack with limitless attempts.

- Get Involved. Grey Swan, NIST is setting standards, two summer programs.

- Introducing. Some things I wouldn’t much notice even in a normal week, frankly.

- In Other AI News. Someone is getting fired over this.

- Oh No What Are We Going to Do. The mistake of taking Balaji seriously.

- Quiet Speculations. Realistic and unrealistic expectations.

- Fully Automated AI R&D Is All You Need.

- IAPS Has Some Suggestions.

- The Quest for Sane Regulations. Dean Ball proposes a win-win trade.

- We The People. The people continue to not care for AI, but not yet much care.

- The Week in Audio.

- Rhetorical Innovation.

- Aligning a Smarter Than Human Intelligence is Difficult.

- People Are Worried About AI Killing Everyone. Elon Musk, a bit distracted.

- Fun With Image Generation.

- Hey, We Do Image Generation Too. Forgot about Reve, and about Ideogram.

- The Lighter Side. Your outie reads many words on the internet.

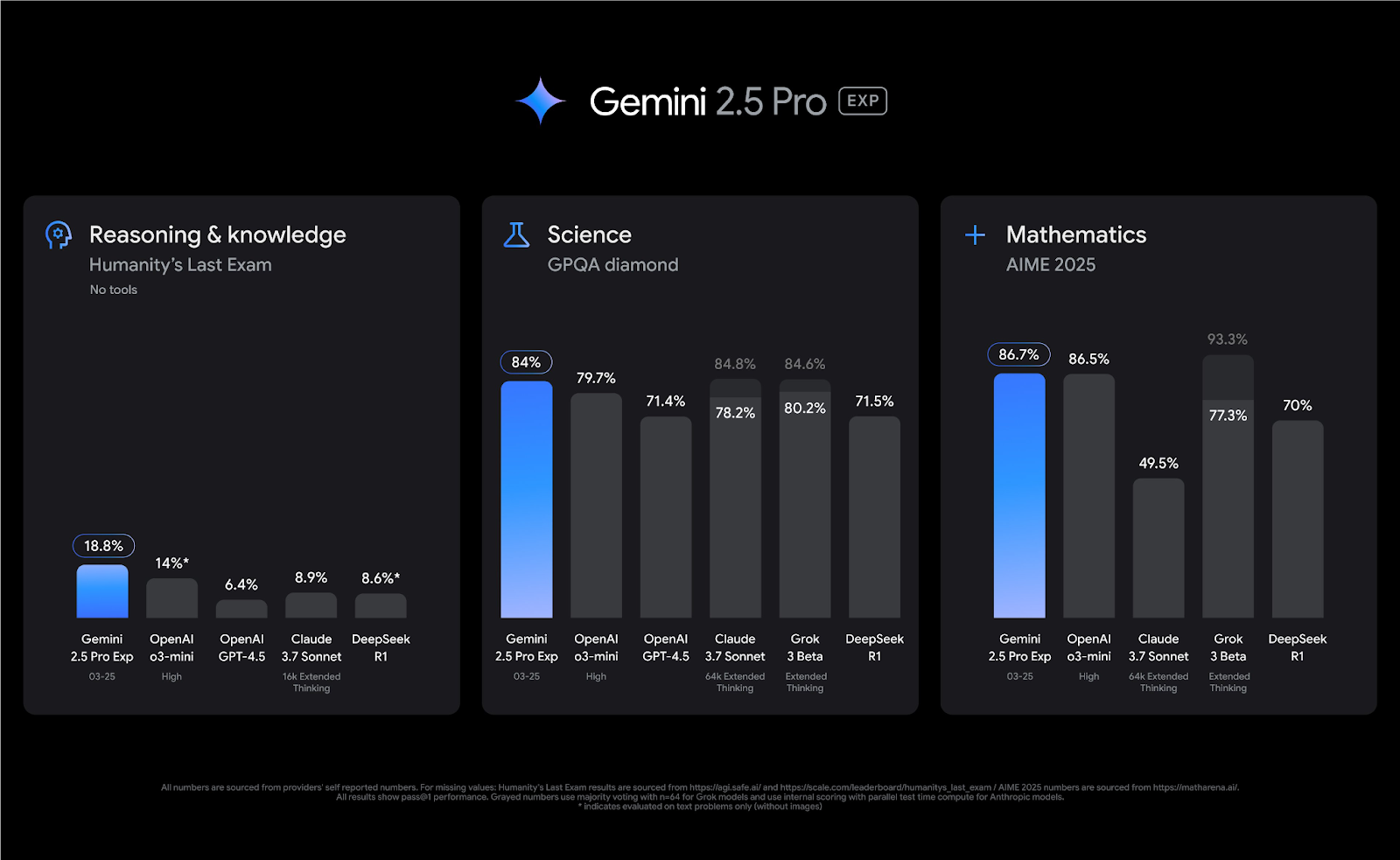

Gemini 2.5 Pro: Marketing Failures

I swear that I put this in as a new recurring section before Gemini 2.5 Pro. Now Gemini 2.5 has come out, and the feedback on it is universally positive, but unless I actively ask about it no one seems to care. Given the circumstances, I’m running this section up top, in the hopes that someone decides to maybe give a damn. As in, I seem to be the Google marketing department. Gemini 2.5 post is coming on either Friday or Monday, we’ll see how the timing works out. That’s what it means to Fail Marketing Forever.

Failures in Marketing

Failing marketing includes:

- Making their models scolds that are no fun to talk to and that will refuse queries.

- No one knowing that Google has good models.

- Calling the release ‘experimental’ and hiding it behind confusing subscriptions and products.

This is an Arena chart, but still, it was kind of crazy, ya know? And this was before Gemini 2.5, which is now atop the Arena by ~40 points.

Swyx: ...so i use images instead. look at how uniform the pareto curves of every frontier lab is.... and then look at Gemini 2.0 Flash. @GoogleDeepMind is highkey goated and this is just in text chat. In native image chat it is in a category of its own.

And that’s with the ‘Gemini is no fun’ penalty. Imagine if Gemini was also fun. There’s also the failure to create ‘g1’ based off Gemma 3. That failure is plausibly a national security issue. Even today, people thinking r1 is ‘ahead’ in some sense is still causing both widespread adaptation and freaking out in response to r1, in ways that are completely unnecessary. Can we please fix this? Google could also cook to help address… other national security issues. But I digress.

Find new uses for existing drugs; in some cases this is already saving lives. ‘Alpha School’ claims to be using AI tutors to get classes in the top 2% of the country. Students spend two hours a day with an AI assistant and use the rest of the day to ‘focus on skills like public speaking, financial literacy and teamwork.’

My reaction was: beware selection effects. Reid Hoffman’s was: Obvious joke aside, I do think AI has the amazing potential to transform education for the vastly better, but I think Reid is importantly wrong for four reasons:

- Alpha School is a luxury good in multiple ways that won’t scale in current form.

- Alpha School is selecting for parents and students, you can’t scale that either.

- A lot of the goods sold here are the ‘top 2%’ as a positional good.

- The teachers unions and other regulatory barriers won’t let this happen soon.

David Perell offers AI-related writing advice, 90-minute video at the link. Based on the write-up: He’s bullish on writers using AI to write with them, but not on those who have it write for them or who do ‘utilitarian writing,’ and he thinks writers are largely hiding their AI methods to avoid disapproval. And he’s quite bullish on AI as an editor. Mostly seems fine but overhyped?

LLM Conversations and Efficiency

Should you be constantly starting new LLM conversations, have one giant one, or do something in between? Andrej Karpathy looks at this partly as an efficiency problem, where extra tokens impact speed, cost and signal to noise.
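To put rough numbers on the efficiency point, here is a minimal sketch. It assumes every turn adds the same number of tokens and that the full history is reprocessed on each turn with no caching, which real deployments may soften considerably. The function names and the figures of 100 turns and 500 tokens per turn are purely illustrative.

```python
def one_thread_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens processed over a conversation when the whole
    history is re-sent on every turn (assumes no caching)."""
    # Turn k carries k * tokens_per_turn tokens of context,
    # so the total is tokens_per_turn * (1 + 2 + ... + turns).
    return tokens_per_turn * turns * (turns + 1) // 2


def fresh_threads_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens if every turn starts a brand-new conversation."""
    return tokens_per_turn * turns


one_thread = one_thread_input_tokens(turns=100, tokens_per_turn=500)
fresh = fresh_threads_input_tokens(turns=100, tokens_per_turn=500)
print(one_thread, fresh, one_thread / fresh)  # 2525000 50000 50.5
```

Under these assumptions the single giant thread processes about fifty times as many input tokens, and that is before counting any cost to signal to noise.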

He also notes it is a training problem. Most training data, especially in fine tuning, will of necessity be short, so you’re going out of distribution in long conversations, and it’s impossible to even say what the optimal responses would be. I notice the alignment implications aren’t great either, including in practice, where long context conversations often are de facto jailbreaks or transformations even if there was no such intent.

Andrej Karpathy: Certainly, it's not clear if an LLM should have a "New Conversation" button at all in the long run. It feels a bit like an internal implementation detail that is surfaced to the user for developer convenience and for the time being. And that the right solution is a very well-implemented memory feature, along the lines of active, agentic context management. Something I haven't really seen at all so far.

Anyway curious to poll if people have tried One Thread and what the word is.

I like Dan Calle’s answer of essentially projects: long threads each dedicated to a particular topic or context, such as a thread on nutrition or building a Linux box. That way, you can sort the context you want from the context you don’t want. Then you actively manage whether to keep or delete even those threads, to avoid cluttering context. And also Owl’s: if they take away my ability to start a fresh thread I will riot.
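The projects approach can be sketched as a small data structure. This is a toy illustration, not how any actual product implements it; `ThreadStore` and its methods are hypothetical names, and messages are plain strings rather than real API objects.

```python
from dataclasses import dataclass, field


@dataclass
class ThreadStore:
    """Hypothetical store of long-running threads, one per topic."""
    threads: dict[str, list[str]] = field(default_factory=dict)

    def add(self, topic: str, message: str) -> None:
        # Append to the topic's thread, creating it on first use.
        self.threads.setdefault(topic, []).append(message)

    def context_for(self, topic: str) -> list[str]:
        # Only the chosen topic's history is returned as context.
        return list(self.threads.get(topic, []))

    def delete(self, topic: str) -> None:
        # Active management: drop a thread that is no longer useful.
        self.threads.pop(topic, None)


store = ThreadStore()
store.add("nutrition", "How much protein per day?")
store.add("linux-box", "Which distro for a home server?")
store.add("nutrition", "What about on rest days?")
store.delete("linux-box")
print(store.context_for("nutrition"))
```

The nutrition thread never sees the Linux questions, and deleting a stale thread removes its context entirely.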